Jul 08, 2025·8 min

Dijkstra & Structured Programming: Discipline That Scales

Edsger Dijkstra’s structured programming ideas explain why disciplined, simple code stays correct and maintainable as teams, features, and systems grow.

Why Dijkstra Still Matters When Software Grows

Software rarely fails because it can’t be written. It fails because, a year later, nobody can change it safely.

As codebases grow, every “small” tweak starts to ripple: a bug fix breaks a distant feature, a new requirement forces rewrites, and a simple refactor turns into a week of careful coordination. The hard part isn’t adding code—it’s keeping behavior predictable while everything around it changes.

The promise: correctness and simplicity lower long-term cost

Edsger Dijkstra argued that correctness and simplicity should be first-class goals, not nice-to-haves. The payoff isn’t academic. When a system is easier to reason about, teams spend less time firefighting and more time building.

- Correctness reduces the cost of mistakes: fewer incidents, fewer regressions, less “don’t touch that” code.

- Simplicity reduces the cost of change: fewer hidden assumptions, fewer special cases, fewer surprises during reviews.

What “scale” actually means

When people say software needs to “scale,” they often mean performance. Dijkstra’s point is different: complexity scales too.

Scale shows up as:

- More features: new flows, edge cases, and user expectations.

- More people: handoffs, differing styles, and varying context.

- More integrations: external APIs, data sources, and failure modes.

- More time: legacy decisions, shifting requirements, and partial rewrites.

The core idea: structure makes behavior easier to predict

Structured programming is not about being strict for its own sake. It’s about choosing control flow and decomposition that make it easy to answer two questions:

- “What happens next?”

- “Under what conditions?”

When behavior is predictable, change becomes routine instead of risky. That’s why Dijkstra still matters: his discipline targets the real bottleneck of growing software—understanding it well enough to improve it.

A Simple Introduction to Edsger Dijkstra and His Goal

Edsger W. Dijkstra (1930–2002) was a Dutch computer scientist who helped shape how programmers think about building reliable software. He worked on early operating systems, contributed to algorithms (including the shortest-path algorithm that carries his name), and—most importantly for everyday developers—pushed the idea that programming should be something we can reason about, not just something we try until it seems to work.

His core focus: reasoning over “it runs on my machine”

Dijkstra cared less about whether a program could be made to produce the right output for a few examples, and more about whether we could explain why it is correct for the cases that matter.

If you can state what a piece of code is supposed to do, you should be able to argue (step by step) that it actually does it. That mindset naturally leads to code that’s easier to follow, easier to review, and less dependent on heroic debugging.

Why he can sound strict—and why that helps

Some of Dijkstra’s writing reads as uncompromising. He criticized “clever” tricks, sloppy control flow, and coding habits that make reasoning difficult. The strictness isn’t about style policing; it’s about reducing ambiguity. When the code’s meaning is clear, you spend less time debating intentions and more time validating behavior.

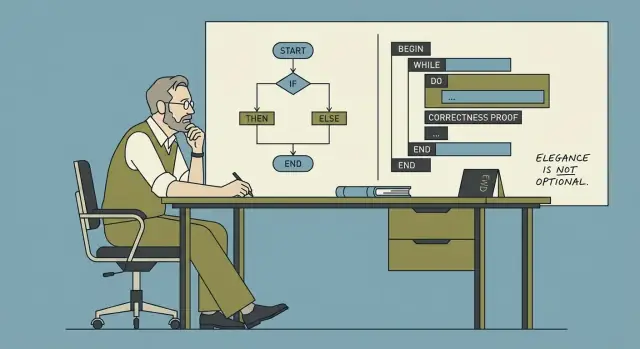

What “structured programming” means (high level)

Structured programming is the practice of building programs from a small set of clear control structures—sequence, selection (if/else), and iteration (loops)—instead of tangled jumps in flow. The goal is simple: make the path through the program understandable so you can explain, maintain, and change it with confidence.

Correctness: The Hidden Feature Your Users Rely On

People often describe software quality as “fast,” “beautiful,” or “feature-rich.” Users experience correctness differently: as the quiet confidence that the app won’t surprise them. When correctness is present, nobody notices. When it’s missing, everything else stops mattering.

“It works now” vs “it keeps working”

“It works now” usually means you tried a few paths and got the expected result. “It keeps working” means it behaves as intended across time, edge cases, and changes—after refactors, new integrations, higher traffic, and new team members touching the code.

A feature can “work now” while still being fragile:

- It depends on input always being clean.

- It assumes a network call always returns quickly.

- It passes tests that only cover the happy path.

Correctness is about removing these hidden assumptions—or making them explicit.
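Here is a minimal Python sketch of making the “input is always clean” assumption explicit. The function name `parse_quantity` and the 1 to 99 range are hypothetical and serve only as illustration:

```python
def parse_quantity(raw: str) -> int:
    """Parse a cart quantity from user input (hypothetical example).

    Precondition: none; any string is accepted.
    Postcondition: returns an int in [1, 99], or raises ValueError
    with a message explaining why the input was rejected.
    """
    text = raw.strip()
    # State the "input is clean" assumption as a check instead of trusting it.
    if not text.isdecimal():
        raise ValueError(f"quantity must be a whole number, got {raw!r}")
    quantity = int(text)
    if not 1 <= quantity <= 99:
        raise ValueError(f"quantity must be between 1 and 99, got {quantity}")
    return quantity
```

Callers now get a predictable, explainable failure (a `ValueError` carrying a reason) instead of a crash or silently wrong data further downstream.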

How small bugs multiply in large systems

A minor bug rarely stays minor once software grows. One incorrect state, one off-by-one boundary, or one unclear error-handling rule gets copied into new modules, wrapped by other services, cached, retried, or “worked around.” Over time, teams stop asking “what’s true?” and start asking “what usually happens?” That’s when incident response turns into archaeology.

The multiplier is dependency: a small misbehavior becomes many downstream misbehaviors, each with its own partial fix.

Clarity is a correctness tool (not just a style choice)

Clear code improves correctness because it improves communication:

- Code reviews catch real issues when intent is obvious.

- Onboarding is faster when rules are readable, not tribal knowledge.

- Incidents resolve sooner when control flow and failure modes are easy to trace.

A practical definition of correctness for product teams

Correctness means: for the inputs and situations we claim to support, the system consistently produces the outcomes we promise—while failing in predictable, explainable ways when it can’t.

Simplicity as a Strategy, Not a Style Preference

Simplicity isn’t about making code “cute,” minimal, or clever. It’s about making behavior easy to predict, explain, and modify without fear. Dijkstra valued simplicity because it improves our ability to reason about programs—especially when the codebase and the team grow.

What simplicity is (and what it is not)

Simple code keeps a small number of ideas in motion at once: clear data flow, clear control flow, and clear responsibilities. It doesn’t force the reader to simulate many alternate paths in their head.

Simplicity is not:

- Fewer lines at any cost

- “Smart” tricks, dense one-liners, or heavy abstraction

- Avoiding structure to look flexible

Accidental complexity: what you didn’t mean to add

Many systems become hard to change not because the domain is inherently complex, but because we introduce accidental complexity: flags that interact in unexpected ways, special-case patches that never get removed, and layers that exist mostly to work around earlier decisions.

Each extra exception is a tax on understanding. The cost shows up later, when someone tries to fix a bug and discovers that a change in one area subtly breaks several others.

Simple designs reduce the need for heroics

When a design is simple, progress comes from steady work: reviewable changes, smaller diffs, and fewer emergency fixes. Teams don’t need “hero” developers who remember every historical edge case or can debug under pressure at 2 a.m. Instead, the system supports normal human attention spans.

Rule of thumb: fewer special cases, fewer surprises

A practical test: if you keep adding exceptions (“unless…”, “except when…”, “only for this customer…”), you’re probably accumulating accidental complexity. Prefer solutions that reduce branching in behavior—one consistent rule beats five special cases, even if the consistent rule is slightly more general than you first imagined.
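As a hypothetical illustration in Python (the regions, fees, and thresholds are invented), compare branch-per-case logic with one rule driven by data. Both functions return the same fees:

```python
# Special-case style: every new exception adds another branch.
def shipping_fee_special_cases(region: str, subtotal: float) -> float:
    if region == "US":
        if subtotal >= 50:
            return 0.0
        return 5.0
    elif region == "EU":
        if subtotal >= 60:
            return 0.0
        return 7.0
    elif region == "UK":
        if subtotal >= 60:
            return 0.0
        return 7.0
    return 10.0


# One consistent rule: each region has a flat fee and a free-shipping threshold.
SHIPPING_RULES = {"US": (5.0, 50.0), "EU": (7.0, 60.0), "UK": (7.0, 60.0)}
DEFAULT_RULE = (10.0, float("inf"))  # unknown regions never ship free


def shipping_fee(region: str, subtotal: float) -> float:
    fee, free_threshold = SHIPPING_RULES.get(region, DEFAULT_RULE)
    return 0.0 if subtotal >= free_threshold else fee
```

Adding a region becomes a data change rather than a new branch, and the behavior stays one sentence long: a flat fee unless the subtotal reaches the region’s threshold.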

Structured Programming: Clear Control Flow You Can Trust

Structured programming is a simple idea with big consequences: write code so its execution path is easy to follow. In plain terms, most programs can be built from three building blocks—sequence, selection, and repetition—without relying on tangled jumps.

The three building blocks (in human terms)

- Sequence: do step A, then step B, then step C.

- Selection: choose a path based on a condition (e.g., `if`/`else`, `switch`).

- Repetition: repeat a set of steps while a condition holds (e.g., `for`, `while`).

When control flow is composed from these structures, you can usually explain what the program does by reading top-to-bottom, without “teleporting” around the file.
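A small Python sketch (the function and its data are hypothetical) that uses all three building blocks in one function; it reads top to bottom with no jumps:

```python
def count_large_orders(order_totals: list[float], threshold: float) -> int:
    # Sequence: set up state, then loop, then return.
    count = 0
    # Repetition: visit each order exactly once.
    for total in order_totals:
        # Selection: only orders at or above the threshold are counted.
        if total >= threshold:
            count += 1
    return count
```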

What it replaced: spaghetti execution paths

Before structured programming became the norm, many codebases leaned heavily on arbitrary jumps (classic goto-style control flow). The problem wasn’t that jumps are always evil; it’s that unrestricted jumping creates execution paths that are hard to predict. You end up asking questions like “How did we get here?” and “What state is this variable in?”—and the code doesn’t answer clearly.

Why clarity matters for real teams

Clear control flow helps humans build a correct mental model. That model is what you rely on when debugging, reviewing a pull request, or changing behavior under time pressure.

When structure is consistent, modification becomes safer: you can change one branch without accidentally affecting another, or refactor a loop without missing a hidden exit path. Readability isn’t just aesthetics—it’s the foundation for changing behavior confidently without breaking what already works.

Reasoning Tools: Invariants, Preconditions, and Postconditions

Dijkstra pushed a simple idea: if you can explain why your code is correct, you can change it with less fear. Three small reasoning tools make that practical—without turning your team into mathematicians.

Invariants: “facts that stay true”

An invariant is a fact that stays true while a piece of code runs, especially inside a loop.

Example: you’re summing prices in a cart. A useful invariant is: “total equals the sum of all items processed so far.” If that stays true at every step, then when the loop ends, the result is trustworthy.

Invariants are powerful because they focus your attention on what must never break, not just what should happen next.
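The cart example as a minimal Python sketch, assuming prices are stored as integer cents to sidestep floating-point rounding. The `assert` re-checks the invariant on every iteration, which costs quadratic time and is there only to make the idea visible; in real code the invariant would more likely live in a comment or a test:

```python
def cart_total(prices: list[int]) -> int:
    """Sum item prices given in cents."""
    total = 0
    for i, price in enumerate(prices):
        total += price
        # Invariant: total equals the sum of all items processed so far.
        assert total == sum(prices[: i + 1])
    # When the loop ends, "processed so far" means "all items".
    return total
```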

Preconditions and postconditions: everyday contracts

A precondition is what must be true before a function runs. A postcondition is what the function guarantees after it finishes.

Everyday examples:

- Precondition: “You can only withdraw money if your account has enough funds.”

- Postcondition: “After withdrawal, the balance is reduced by exactly that amount, and never becomes negative.”

In code, a precondition might be “input list is sorted,” and the postcondition might be “output list is sorted and contains the same elements plus the inserted one.”
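The sorted-insert contract can be sketched in Python with both conditions checked by assertions. `insert_sorted` is a hypothetical name; the insertion itself uses `bisect.insort` from the standard library:

```python
import bisect
from collections import Counter


def insert_sorted(items: list[int], value: int) -> list[int]:
    """Return a new sorted list containing items plus value.

    Precondition: items is sorted in ascending order.
    Postcondition: the result is sorted and contains exactly the
    elements of items plus one copy of value.
    """
    assert all(a <= b for a, b in zip(items, items[1:])), "precondition: items must be sorted"

    result = list(items)  # copy so the caller's list is not mutated
    bisect.insort(result, value)

    assert all(a <= b for a, b in zip(result, result[1:])), "postcondition: result is sorted"
    assert Counter(result) == Counter(items) + Counter([value]), "postcondition: same elements plus value"
    return result
```

Writing the postcondition as code makes the “same elements plus the inserted one” clause precise: it counts duplicates, so inserting `2` into `[2, 2]` must yield three 2s.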

How writing them down changes coding and reviews

When you write these down (even informally), design gets sharper: you decide what a function expects and promises, and you naturally make it smaller and more focused.

In reviews, it shifts debate away from style (“I’d write it differently”) toward correctness (“Does this code maintain the invariant?” “Do we enforce the precondition or document it?”).

Lightweight practice: comment where bugs cluster

You don’t need formal proofs to benefit. Pick the buggiest loop or the trickiest state update and add a one-line invariant comment above it. When someone edits the code later, that comment acts like a guardrail: if a change breaks this fact, the code is no longer safe.
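Binary search is a classic loop where off-by-one bugs cluster. In this Python sketch (`find_index` is a hypothetical helper), a one-line invariant comment states exactly what each branch must preserve:

```python
def find_index(items: list[int], target: int) -> int:
    """Return an index of target in the sorted list items, or -1 if absent."""
    lo, hi = 0, len(items)
    # Invariant: if target is in items, its index lies in the half-open range [lo, hi).
    while lo < hi:
        mid = (lo + hi) // 2
        if items[mid] == target:
            return mid
        if items[mid] < target:
            lo = mid + 1  # everything at or before mid is too small
        else:
            hi = mid  # everything at or after mid is too large
    return -1
```

If someone later changes `hi = mid` to `hi = mid - 1`, the invariant exposes the problem in review: with a half-open range, that edit can skip a valid index.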

Testing vs Reasoning: What Each Can and Can’t Guarantee

Testing and reasoning aim at the same outcome—software that behaves as intended—but they work very differently. Tests discover problems by trying examples. Reasoning prevents whole categories of problems by making the logic explicit and checkable.

What tests are great at

Tests are a practical safety net. They catch regressions, verify real-world scenarios, and document expected behavior in a way the whole team can run.

But, as Dijkstra famously observed, testing can show the presence of bugs, never their absence. No test suite covers every input, every timing variation, or every interaction between features. Many “works on my machine” failures come from untested combinations: a rare input, a specific order of operations, or a subtle state that only appears after several steps.

What reasoning can guarantee (and what it can’t)

Reasoning is about proving properties of the code: “this loop always terminates,” “this variable is never negative,” “this function never returns an invalid object.” When done well, it rules out entire classes of defects—especially around boundaries and edge cases.

The limitation is effort and scope. Full formal proofs for an entire product are rarely economical. Reasoning works best when applied selectively: core algorithms, security-sensitive flows, money or billing logic, and concurrency.
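A termination argument often needs only one extra fact: a quantity that is never negative and strictly decreases on every iteration. Euclid’s algorithm is a compact illustration, sketched here in Python. The standard library already provides `math.gcd`; this version exists only to show the reasoning:

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers."""
    if a < 0 or b < 0:
        raise ValueError("gcd expects non-negative integers")
    # Invariant: gcd(a, b) is unchanged by each update.
    # Termination: b is never negative, and each iteration replaces b with
    # a % b, which is strictly smaller than b. A non-negative integer cannot
    # decrease forever, so the loop must stop.
    while b != 0:
        a, b = b, a % b
    return a
```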

A balanced approach that scales

Use tests broadly, and apply deeper reasoning where failure is expensive.

A practical bridge between the two is to make intent executable:

- Assertions for internal assumptions (e.g., “index is in range”).

- Preconditions and postconditions (contracts) for function inputs/outputs.

- Invariants for persistent truths (e.g., “cart total equals sum of items”).

These techniques don’t replace tests—they tighten the net. They turn vague expectations into checkable rules, making bugs harder to write and easier to diagnose.
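The bank-withdrawal contract from earlier, as a Python sketch. The names `withdraw` and `InsufficientFunds` are hypothetical, and amounts are assumed to be integer cents. Preconditions are enforced with explicit errors because callers can violate them; the postcondition uses `assert` because a failure there would mean a bug inside this function:

```python
class InsufficientFunds(Exception):
    """Raised when a withdrawal would violate the precondition."""


def withdraw(balance_cents: int, amount_cents: int) -> int:
    """Return the new balance after withdrawing amount_cents.

    Preconditions: amount_cents > 0 and amount_cents <= balance_cents.
    Postcondition: the balance drops by exactly the amount and never
    becomes negative.
    """
    # Enforce preconditions at the boundary with explicit, explainable errors.
    if amount_cents <= 0:
        raise ValueError("withdrawal amount must be positive")
    if amount_cents > balance_cents:
        raise InsufficientFunds(f"balance {balance_cents} < amount {amount_cents}")

    new_balance = balance_cents - amount_cents

    # Postcondition check: an internal assumption, not user-input validation.
    assert 0 <= new_balance < balance_cents
    return new_balance
```

One design note: Python strips `assert` statements when run with `-O`, so they suit internal assumptions, while anything a caller can get wrong deserves a real exception.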

Discipline: How Teams Avoid “Cleverness Debt”

“Clever” code often feels like a win in the moment: fewer lines, a neat trick, a one-liner that makes you feel smart. The problem is that cleverness doesn’t scale across time or across people. Six months later, the author forgets the trick. A new teammate reads it literally, misses the hidden assumption, and changes it in a way that breaks behavior. That’s “cleverness debt”: short-term speed bought with long-term confusion.

Discipline is a team accelerator

Dijkstra’s point wasn’t “write boring code” as a style preference—it was that disciplined constraints make programs easier to reason about. On a team, constraints also reduce decision fatigue. If everyone already knows the defaults (how to name things, how to structure functions, what “done” looks like), you stop re-litigating basics in every pull request. That time goes back into product work.

Discipline shows up in routine practices:

- Code reviews that reward clarity over novelty (“Could someone else change this safely?”).

- Shared standards (formatting, naming, error handling) so the codebase reads like one voice.

- Refactoring as maintenance, not as a rescue mission—small cleanups kept continuous.

What “disciplined” looks like in code

A few concrete habits prevent cleverness debt from accumulating:

- Small functions that do one job, with obvious inputs and outputs.

- Clear names that explain intent (prefer `calculate_total()` over `do_it()`).

- No hidden state: minimize globals and surprising side effects; pass dependencies explicitly.

- Straight control flow: avoid logic that relies on subtle ordering, magic values, or “it works if you know the trick.”

Discipline isn’t about perfection—it’s about making the next change predictable.
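A short Python sketch of the naming and hidden-state habits, reusing the `do_it` and `calculate_total` names; the tax-rate logic is a hypothetical example:

```python
# Hidden state: the result depends on a global that any module can change,
# so the same call can return different answers over time.
TAX_RATE = 0.2


def do_it(prices):
    return sum(prices) * (1 + TAX_RATE)


# Disciplined version: a clear name, explicit inputs, one job, no globals.
def calculate_total(prices_cents: list[int], tax_rate: float) -> int:
    """Return the tax-inclusive total in cents, rounded to a whole cent."""
    if tax_rate < 0:
        raise ValueError("tax_rate must be non-negative")
    subtotal = sum(prices_cents)
    return round(subtotal * (1 + tax_rate))
```

Because the tax rate is now a parameter, a test or a reviewer can see every input that affects the result in the call itself.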

Modularity and Boundaries: Keeping Change Local

Modularity isn’t just “splitting code into files.” It’s isolating decisions behind clear boundaries, so the rest of the system doesn’t need to know (or care) about internal details. A module hides the messy parts—data structures, edge cases, performance tricks—while exposing a small, stable surface.

How modules shrink the blast radius

When a change request arrives, the ideal outcome is: one module changes, and everything else stays untouched. That’s the practical meaning of “keeping change local.” Boundaries prevent accidental coupling—where updating one feature silently breaks three others because they shared assumptions.

A good boundary also makes reasoning easier. If you can state what a module guarantees, you can reason about the larger program without re-reading its entire implementation every time.

Interfaces as promises (and how they enable parallel work)

An interface is a promise: “Given these inputs, I will produce these outputs and maintain these rules.” When that promise is clear, teams can work in parallel:

- One person can implement the module.

- Another can build a caller using the interface.

- QA can design tests around the promised behavior.

This isn’t about bureaucracy—it’s about creating safe coordination points in a growing codebase.
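In Python, one way to write such a promise down is a `typing.Protocol`. In this sketch, `PaymentGateway`, `FakeGateway`, and `checkout` are hypothetical names: one person could implement a real gateway, another could write `checkout` against the protocol, and tests could use the fake, all relying on the same contract:

```python
from typing import Protocol


class PaymentGateway(Protocol):
    """Promise: charge() takes a positive amount in cents and returns a
    non-empty transaction id, or raises ValueError for invalid amounts."""

    def charge(self, account_id: str, amount_cents: int) -> str: ...


class FakeGateway:
    """Test double that keeps the same promise before a real module exists."""

    def __init__(self) -> None:
        self.charges: list[tuple[str, int]] = []

    def charge(self, account_id: str, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        self.charges.append((account_id, amount_cents))
        return f"txn-{len(self.charges)}"


def checkout(gateway: PaymentGateway, account_id: str, amount_cents: int) -> str:
    # The caller depends only on the interface, not on any implementation.
    return gateway.charge(account_id, amount_cents)
```

`FakeGateway` never inherits from `PaymentGateway`; protocols are structural, so a type checker accepts any class whose methods match the promised signature.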

Simple module checks that prevent drift

You don’t need a grand architecture review to improve modularity. Try these lightweight checks:

- Inputs/outputs: Can you list the module’s inputs, outputs, and side effects in a few lines? If not, it’s probably doing too much.

- Ownership: Who is responsible for its behavior and changes? Unowned modules turn into dumping grounds.

- Dependencies: Does it depend on “everything,” or only what it truly needs? Fewer dependencies mean fewer surprise breakages.

Well-drawn boundaries turn “change” from a system-wide event into a localized edit.

Why These Ideas Win at Scale (Teams, Codebases, and Time)

When software is small, you can “keep it all in your head.” At scale, that stops being true—and the failure modes become familiar.

Common symptoms look like this:

- Outages that trace back to one surprising corner case

- Releases that slow down because every change feels risky

- Fragile integrations where a minor update breaks three downstream systems

Structure lowers cognitive load

Dijkstra’s core bet was that humans are the bottleneck. Clear control flow, small well-defined units, and code you can reason about aren’t aesthetic choices—they’re capacity multipliers.

In a large codebase, structure acts like compression for understanding. If functions have explicit inputs/outputs, modules have boundaries you can name, and the “happy path” isn’t tangled with every edge case, developers spend less time reconstructing intent and more time making deliberate changes.

It scales with teams, not just code

As teams grow, communication costs rise faster than line counts. Disciplined, readable code reduces the amount of tribal knowledge required to contribute safely.

That shows up immediately in onboarding: new engineers can follow predictable patterns, learn a small set of conventions, and make changes without needing a long tour of “gotchas.” The code itself teaches the system.

Incidents get simpler to debug—and safer to undo

During an incident, time is scarce and confidence is fragile. Code written with explicit assumptions (preconditions), meaningful checks, and straightforward control flow is easier to trace under pressure.

Just as importantly, disciplined changes are easier to roll back. Smaller, localized edits with clear boundaries reduce the chance that a rollback triggers new failures. The result isn’t perfection—it’s fewer surprises, faster recovery, and a system that stays maintainable as years and contributors accumulate.

Applying Dijkstra Without Being Dogmatic

Dijkstra’s point wasn’t “write code the old way.” It was “write code you can explain.” You can adopt that mindset without turning every feature into a formal proof exercise.

Turn principles into daily habits

Start with choices that make reasoning cheap:

- Prefer simple control flow: a few small functions over one multi-branch “do everything” routine.

- Reduce side effects: keep mutation close to where it’s needed, and don’t let functions quietly change global state.

- Use clear contracts: make inputs, outputs, and error behavior explicit (in types, names, and comments).

A good heuristic: if you can’t summarize what a function guarantees in one sentence, it’s probably doing too much.
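A hypothetical Python example of the side-effect habit: both functions compute the same tags, but only the second can be summarized in one sentence without mentioning what happens to the caller’s data:

```python
def normalize_tags_in_place(tags: list[str]) -> None:
    # Side effect: silently rewrites the caller's list.
    tags[:] = sorted({t.strip().lower() for t in tags if t.strip()})


def normalize_tags(tags: list[str]) -> list[str]:
    """Return lowercase, trimmed, de-duplicated, sorted tags.

    One-sentence guarantee: the input is left untouched and the result
    contains each non-blank tag exactly once, in sorted order.
    """
    return sorted({t.strip().lower() for t in tags if t.strip()})
```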

Small “structure upgrades” (no rewrites)

You don’t need a big refactor sprint. Add structure at the seams:

- Extract a complex loop into a named function and define what remains true each iteration.

- Replace “magic” conditionals with well-named predicates (e.g., `isEligibleForRefund`).

- Encapsulate a tricky state transition behind a single function so the rest of the codebase can’t misuse it.

These upgrades are incremental: they lower the cognitive load for the next change.
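Two of these upgrades, sketched in Python. The order statuses, the 30-day refund window, and the names `is_eligible_for_refund` and `transition` are hypothetical; `is_eligible_for_refund` is simply the Python-style spelling of an `isEligibleForRefund` predicate:

```python
from datetime import date, timedelta
from enum import Enum


class OrderStatus(Enum):
    PLACED = "placed"
    SHIPPED = "shipped"
    DELIVERED = "delivered"
    REFUNDED = "refunded"


REFUND_WINDOW = timedelta(days=30)  # hypothetical policy

# The only transitions the rest of the codebase is allowed to make.
ALLOWED = {
    OrderStatus.PLACED: {OrderStatus.SHIPPED},
    OrderStatus.SHIPPED: {OrderStatus.DELIVERED},
    OrderStatus.DELIVERED: {OrderStatus.REFUNDED},
    OrderStatus.REFUNDED: set(),
}


def is_eligible_for_refund(status: OrderStatus, delivered_on: date, today: date) -> bool:
    # Named predicate replacing an inline condition such as
    # `status == DELIVERED and (today - delivered_on).days <= 30`.
    return status is OrderStatus.DELIVERED and today - delivered_on <= REFUND_WINDOW


def transition(current: OrderStatus, new: OrderStatus) -> OrderStatus:
    """Single entry point for status changes; rejects illegal moves."""
    if new not in ALLOWED[current]:
        raise ValueError(f"cannot move order from {current.value} to {new.value}")
    return new
```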

Code review prompts that keep you honest

When reviewing (or writing) a change, ask:

- “What must be true here?” (invariants, assumptions, required state)

- “What can change safely?” (which parts are allowed to vary without breaking callers)

If reviewers can’t answer quickly, the code is signaling hidden dependencies.

Document the reasoning, not just the steps

Comments that restate the code go stale. Instead, write why the code is correct: the key assumptions, edge cases you’re guarding, and what would break if those assumptions change. A short note like “Invariant: total is always the sum of processed items” can be more valuable than a paragraph of narration.

If you want a lightweight place to capture these habits, collect them into a shared checklist (see /blog/practical-checklist-for-disciplined-code).

Where AI-Assisted Building Fits (Without Losing the Discipline)

Modern teams increasingly use AI to accelerate delivery. The risk is familiar: speed today can turn into confusion tomorrow if the generated code is hard to explain.

A Dijkstra-friendly way to use AI is to treat it as an accelerator for structured thinking, not a replacement for it. For example, when building in Koder.ai—a vibe-coding platform where you create web, backend, and mobile apps through chat—you can keep the “reasoning first” habits by making your prompts and review steps explicit:

- Ask for clear contracts: “Define preconditions, postconditions, and error behavior for this endpoint.”

- Ask for invariants in stateful flows: “What must always be true after each step of this checkout state machine?”

- Use planning mode to force decomposition into small, reviewable pieces (modules, interfaces, responsibilities) before generating implementation details.

- Lean on snapshots and rollback to keep changes small and reversible—mirroring the discipline of localized edits and safe undo paths.

Even if you eventually export the source code and run it elsewhere, the same principle applies: generated code should be code you can explain.

A Practical Checklist for Correct, Simple, Disciplined Code

This is a lightweight “Dijkstra-friendly” checklist you can use during reviews, refactors, or before merging. It’s not about writing proofs all day—it’s about making correctness and clarity the default.

Quick self-check (new code and refactors)

- Can I explain the code to a teammate in 60 seconds? If the explanation requires lots of “just trust me,” simplify.

- Is the control flow obvious? Prefer straight-line code; keep loops and conditionals small; avoid hidden exits and deeply nested branches.

- What are the preconditions and postconditions? Write them down in a comment, docstring, or function name. If you can’t state them, the function is probably doing too much.

- Does each function have one job and a clear boundary? Inputs in, outputs out—minimal reliance on global state.

- What invariant keeps this loop honest? Even a one-line note like “`total` always equals the sum of processed items” helps prevent subtle bugs.

- Does the code use any “clever” tricks it doesn’t need? If the code needs a tour guide, it’s accruing cleverness debt.

What to measure qualitatively

- Ease of explanation: Can someone unfamiliar with the module tell you what it does and why it’s correct?

- Ease of testing: Are edge cases naturally testable, or do you need elaborate setup and mocking?

- Change risk: When requirements shift, can you predict what breaks? If every change feels scary, boundaries are leaking.

A practical next step

Pick one messy module and restructure control flow first:

- Extract small functions with clear names.

- Replace tangled branches with simpler, explicit cases.

- Move special cases to the edges (input validation, early returns).

Then add a few focused tests around the new boundaries. If you want more patterns like this, browse related posts at /blog.
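A before-and-after sketch in Python of moving special cases to the edges. The order fields and messages are hypothetical, and errors are returned as strings only to keep the example short; the second version behaves identically but reads top to bottom:

```python
# Before: special cases are tangled into the main logic.
def shipping_label_nested(order: dict) -> str:
    if order.get("address"):
        if order.get("paid"):
            if not order.get("cancelled"):
                return f"Ship to {order['address']}"
            else:
                return "error: order cancelled"
        else:
            return "error: order not paid"
    else:
        return "error: missing address"


# After: special cases handled at the edge with early returns,
# leaving one obvious happy path at the bottom.
def shipping_label(order: dict) -> str:
    if not order.get("address"):
        return "error: missing address"
    if not order.get("paid"):
        return "error: order not paid"
    if order.get("cancelled"):
        return "error: order cancelled"
    return f"Ship to {order['address']}"
```

The equivalence is easy to check exhaustively here (two addresses × paid × cancelled), which is exactly the kind of focused test worth adding around a new boundary.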

FAQ

Why does Dijkstra still matter for modern software teams?

Because as codebases grow, the main bottleneck becomes understanding—not typing. Dijkstra’s emphasis on predictable control flow, clear contracts, and correctness reduces the risk that a “small change” causes surprising behavior elsewhere, which is exactly what slows teams down over time.

What does “scale” mean in this post—performance or something else?

In this context, “scale” is less about performance and more about complexity multiplying:

- more features and edge cases

- more contributors and handoffs

- more integrations and failure modes

- more time and legacy decisions

These forces make reasoning and predictability more valuable than cleverness.

What is structured programming, in practical terms?

Structured programming favors a small set of clear control structures:

- sequence (do A then B)

- selection (`if`/`else`, `switch`)

- repetition (`for`, `while`)

The goal isn’t rigidity—it’s making execution paths easy to follow so you can explain behavior, review changes, and debug without “teleporting” through the code.

Why is spaghetti control flow (like unrestricted `goto`) such a maintenance problem?

The problem is unrestricted jumping that creates hard-to-predict paths and unclear state. When control flow becomes tangled, developers waste time answering basic questions like “How did we get here?” and “What state is valid right now?”

Modern equivalents include deeply nested branching, scattered early exits, and implicit state changes that make behavior hard to trace.

What’s a practical definition of correctness for product software?

Correctness is the “quiet feature” users rely on: the system consistently does what it promises and fails in predictable, explainable ways when it can’t. It’s the difference between “it works in a few examples” and “it keeps working after refactors, integrations, and edge cases show up.”

Why do small bugs become expensive in large systems?

Because dependencies amplify errors. A small incorrect state or boundary bug gets copied, cached, retried, wrapped, and “worked around” across modules and services. Over time, teams stop asking “what’s true?” and start relying on “what usually happens,” which makes incidents harder and changes riskier.

What does “simplicity” mean here (and what doesn’t it mean)?

Simplicity is about few ideas in motion at once: clear responsibilities, clear data flow, and minimal special cases. It’s not about fewer lines or clever one-liners.

A good test is whether behavior remains easy to predict when requirements change. If every new case adds “unless…” rules, you’re accumulating accidental complexity.

How do invariants help in everyday code without doing formal proofs?

An invariant is a fact that should remain true throughout a loop or state transition. A lightweight way to use it:

- write a one-line comment above the loop (e.g., “`total` equals the sum of processed items”)

- update the code until you can honestly keep that statement true at each iteration

- add an assertion if it’s cheap and valuable

This makes later edits safer because the next person knows what must not break.

How should teams balance testing vs reasoning?

Testing finds bugs by trying examples; reasoning prevents whole categories of bugs by making logic explicit. Tests can’t prove absence of defects because they can’t cover every input or timing. Reasoning is especially valuable for high-cost failure areas (money, security, concurrency).

A practical blend is: broad tests + targeted assertions + clear preconditions/postconditions around critical logic.

What are a few incremental ways to apply Dijkstra’s ideas without being dogmatic?

Start with small, repeatable moves that reduce cognitive load:

- extract small functions and state each function’s inputs/outputs

- replace “magic” conditionals with well-named predicates

- encapsulate tricky state changes behind a single boundary

- add short comments that capture why it’s correct (invariants, assumptions), not what the code literally does

These are incremental “structure upgrades” that make the next change cheaper without requiring a rewrite.