Aug 09, 2025·8 min

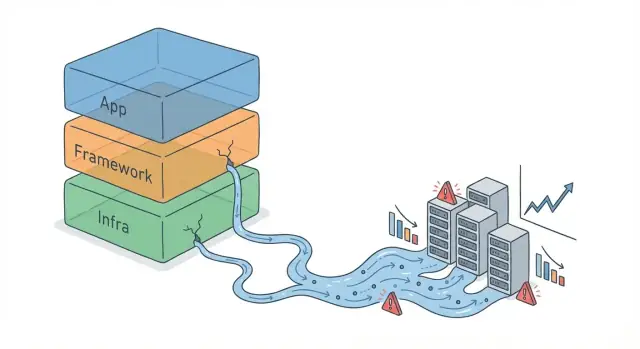

How Framework Abstractions Leak When Systems Scale Up

Learn why high-level frameworks break down at scale, the most common leak patterns, the symptoms to watch for, and practical design and ops fixes.

What “Abstraction Leaks” Means Under Scale

An abstraction is a simplifying layer: a framework API, an ORM, a message queue client, even a “one-line” caching helper. It lets you think in higher-level concepts (“save this object,” “send this event”) without constantly handling the lower-level mechanics.

An abstraction leak happens when those hidden details start affecting real outcomes anyway—so you’re forced to understand and manage what the abstraction tried to hide. The code still “works,” but the simplified model no longer predicts real behavior.

Why leaks stay invisible early on

Early growth is forgiving. With low traffic and small datasets, inefficiencies hide behind spare CPU, warm caches, and fast queries. Latency spikes are rare, retries don’t pile up, and a slightly wasteful log line doesn’t matter.

As volume increases, the same shortcuts can amplify:

- More requests turn tiny overhead into a steady bottleneck.

- Bigger tables make “convenient” queries expensive.

- More services increase the chance that timeouts, retries, and partial failures chain together.

Leaks aren’t just about speed

Leaky abstractions usually show up in three areas:

- Performance: slow queries, thread exhaustion, excessive serialization, unexpected N+1 calls.

- Reliability: retry storms, queue buildup, timeouts that trigger cascading failures.

- Cost: higher cloud bills from chatty services, over-logging, inefficient caching, and avoidable storage/network usage.

What to expect in this guide

Next, we’ll focus on practical signals that an abstraction is leaking, how to diagnose the underlying cause (not just symptoms), and mitigation options—from configuration tweaks to deliberately “dropping down a level” when the abstraction no longer matches your scale.

Why Scale Changes the Rules

A lot of software follows the same arc: a prototype proves the idea, a product ships, then usage grows faster than the original architecture. Early on, frameworks feel magical because their defaults let you move quickly—routing, database access, logging, retries, and background jobs “for free.”

At scale, you still want those benefits—but the defaults and convenience APIs start behaving like assumptions.

Defaults are tuned for “normal” workloads

Framework defaults usually assume:

- modest data size

- steady traffic

- limited concurrency

- predictable execution time

Those assumptions hold early, so the abstraction looks clean. But scale changes what “normal” means. A query that’s fine at 10,000 rows becomes slow at 100 million. A synchronous handler that felt simple starts timing out when traffic spikes. A retry policy that smoothed over occasional failures can amplify outages when thousands of clients retry at once.

Volume, bursts, and concurrency expose hidden costs

Scale isn’t just “more users.” It’s higher data volume, bursty traffic, and more concurrent work happening at the same time. These push on the parts abstractions hide: connection pools, thread scheduling, queue depth, memory pressure, I/O limits, and rate limits from dependencies.

Frameworks often pick safe, generic settings (pool sizes, timeouts, batching behavior). Under load, those settings can translate into contention, long-tail latency, and cascading failures—problems that weren’t visible when everything fit comfortably within margins.

Production isn’t staging with extra traffic

Staging environments rarely mirror production conditions: smaller datasets, fewer services, different caching behavior, and less “messy” user activity. In production you also have real network variability, noisy neighbors, rolling deploys, and partial failures. That’s why abstractions that seemed airtight in tests can start leaking once real-world conditions apply pressure.

Common Signals That an Abstraction Is Leaking

When a framework abstraction leaks, the symptoms rarely show up as a neat error message. Instead, you see patterns: behavior that was fine at low traffic becomes unpredictable or expensive at higher volume.

Typical performance symptoms

A leaking abstraction often announces itself through user-visible latency:

- Endpoints that get slower in a non-linear way (p95/p99 explode while averages look “okay”)

- Timeouts that start appearing only under peak load

- Queue buildup (background jobs, message consumers, thread pools) where work arrives faster than it can be processed

- Sudden throughput ceilings: you add instances, but requests per second barely improves

These are classic signs that the abstraction is hiding a bottleneck you can’t relieve without dropping a level (e.g., inspecting actual queries, connection usage, or I/O behavior).
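To make the "averages look okay" point concrete, here is a small sketch that summarizes a made-up latency sample. The numbers and the nearest-rank `percentile` helper are assumptions for illustration, not measurements from a real system.

```python
def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, -(-pct * len(ordered) // 100))  # integer ceiling division
    return ordered[rank - 1]

def summarize(samples):
    return {
        "mean": sum(samples) / len(samples),
        "p50": percentile(samples, 50),
        "p99": percentile(samples, 99),
    }

# Hypothetical sample: 98 fast requests (20 ms) and 2 that queued behind a busy pool (2000 ms).
latencies_ms = [20] * 98 + [2000] * 2
summary = summarize(latencies_ms)
print(summary)
```

In this sample the mean is about 60 ms and the median is 20 ms, while p99 is 2000 ms. A dashboard that only plots the average would show nothing alarming.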

Cost symptoms that look like “mystery bills”

Some leaks show up first in invoices rather than dashboards:

- Database CPU spikes or rising IOPS without an obvious feature launch

- Cache thrash: hit rate swings wildly, evictions climb, or hot keys dominate

- Egress fees that jump because a “convenient” middleware or proxy path causes unexpected cross-zone/region traffic

- More nodes needed just to hold the same load, because overhead (serialization, logging, retries) grows with volume

If scaling up infrastructure doesn’t restore performance proportionally, it’s often not raw capacity—it’s overhead you didn’t realize you were paying.

Reliability symptoms (the scary ones)

Leaks become reliability problems when they interact with retries and dependency chains:

- Cascading failures: one slow dependency triggers timeouts upstream, which triggers more load elsewhere

- Retries amplify load: a timeout causes clients/workers to retry, doubling or tripling pressure on the weakest component

- Circuit breakers and rate limits “randomly” firing because latency variance increases

- Incidents that start as “just slower” and end as partial outages

Quick checklist: leak or underprovisioning?

Use this to sanity-check before you buy more capacity:

- Does performance improve linearly when you double resources? If not, suspect a leak.

- Are p95/p99 latency and error rates worsening while CPU on app servers stays moderate? Often a hidden dependency bottleneck.

- Do you see disproportionate database/cache/network growth relative to request volume? Likely the abstraction is generating extra work.

- Do retries/queues correlate with spikes (load creates more load)? That’s usually a leak interacting with failure handling.

If symptoms concentrate in one dependency (DB, cache, network) and don’t respond predictably to “more servers,” it’s a strong indicator you need to look beneath the abstraction.

Database Abstractions: ORMs, Queries, and Hidden Costs

ORMs are great at removing boilerplate, but they also make it easy to forget that every object eventually becomes a SQL query. At small scale, that trade-off feels invisible. At higher volumes, the database is often the first place where a “clean” abstraction starts charging interest.

The sudden appearance of N+1 queries

N+1 happens when you load a list of parent records (1 query) and then, inside a loop, load related records for each parent (N more queries). In local testing it looks fine—maybe N is 20. In production, N becomes 2,000, and your app quietly turns one request into thousands of round trips.

The tricky part is that nothing “breaks” immediately; latency creeps up, connection pools fill, and retries multiply the load.
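A minimal sketch of the pattern, using Python's built-in `sqlite3` in place of a real ORM. The `authors`/`posts` schema and the `CountingConnection` wrapper are hypothetical; the wrapper only stands in for a query log so the round trips can be counted.

```python
import sqlite3

def make_db(n_authors=50):
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT)")
    conn.executemany("INSERT INTO authors VALUES (?, ?)",
                     [(i, f"author-{i}") for i in range(n_authors)])
    conn.executemany("INSERT INTO posts (author_id, title) VALUES (?, ?)",
                     [(i, f"post-{i}") for i in range(n_authors)])
    return conn

class CountingConnection:
    """Thin wrapper that counts executed statements."""
    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def execute(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params)

def titles_n_plus_one(db):
    # 1 query for the parents, then 1 query per parent inside the loop.
    result = {}
    for author_id, name in db.execute("SELECT id, name FROM authors").fetchall():
        rows = db.execute("SELECT title FROM posts WHERE author_id = ?", (author_id,)).fetchall()
        result[name] = [title for (title,) in rows]
    return result

def titles_joined(db):
    # Same data in a single round trip.
    result = {}
    rows = db.execute(
        "SELECT a.name, p.title FROM authors a "
        "LEFT JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    ).fetchall()
    for name, title in rows:
        result.setdefault(name, [])
        if title is not None:
            result[name].append(title)
    return result
```

With 50 authors, the loop version issues 51 statements and the join issues 1, for identical results. Most ORMs expose an eager-loading option that produces something closer to the second shape.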

Over-fetching, missing indexes, and expensive joins

Abstractions often encourage fetching full objects by default, even when you only need two fields. That increases I/O, memory, and network transfer.

At the same time, ORMs can generate queries that skip the indexes you assumed were being used (or that never existed). A single missing index can turn a selective lookup into a table scan.

Joins are another hidden cost: what reads as “just include the relation” can become a multi-join query with large intermediate results.

Connection pools and transaction contention

Under load, database connections are a scarce resource. If each request fans out into multiple queries, the pool hits its limit quickly and your app starts queueing.
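A back-of-the-envelope estimate shows how quickly fan-out eats a pool. The sketch below applies Little's law under simplifying assumptions: queries within a request run one after another, and a connection is held only while a query executes. The pool size and timings are made-up numbers.

```python
def max_requests_per_second(pool_size, queries_per_request, avg_query_ms):
    """Upper bound on throughput before requests start waiting for a connection."""
    busy_ms_per_request = queries_per_request * avg_query_ms
    return pool_size * 1000 / busy_ms_per_request

# Hypothetical pool of 20 connections, 5 ms per query.
print(max_requests_per_second(20, 1, 5))   # one query per request
print(max_requests_per_second(20, 50, 5))  # an N+1 endpoint issuing 50 queries
```

Under these assumptions the same pool supports roughly 4,000 requests per second at one query each, but only about 80 when every request issues 50 queries.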

Long transactions (sometimes accidental) can also cause contention—locks last longer, and concurrency collapses.

Mitigations that scale better

- Use eager loading for known relationships, but be deliberate: fetch only what you need.

- Shape queries: select specific columns, add pagination, and avoid unbounded “load all” patterns.

- Batch operations where possible (bulk inserts/updates) to reduce per-row overhead.

- For read-heavy systems, introduce read replicas and route safe queries to them.

- Validate ORM-generated SQL with explain plans, and treat indexes as part of application design—not a DBA afterthought.
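As a rough illustration of checking a plan before and after adding an index, the sketch below uses SQLite's `EXPLAIN QUERY PLAN` as a stand-in. The `orders` table and index name are hypothetical, and the exact plan wording differs between database engines and versions (PostgreSQL, for example, uses `EXPLAIN` and `EXPLAIN ANALYZE`).

```python
import sqlite3

def query_plan(conn, sql, params=()):
    """Return SQLite's plan for a statement as one readable string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column holds the description

def plans_before_and_after_index():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total REAL)")
    conn.executemany("INSERT INTO orders (user_id, total) VALUES (?, ?)",
                     [(i % 100, 9.99) for i in range(1000)])
    sql = "SELECT id, total FROM orders WHERE user_id = ?"

    before = query_plan(conn, sql, (42,))  # expected to be a full scan
    conn.execute("CREATE INDEX idx_orders_user ON orders (user_id)")
    after = query_plan(conn, sql, (42,))   # expected to search via the index
    return before, after

before, after = plans_before_and_after_index()
print("before:", before)
print("after: ", after)
```

The same habit applies to SQL your ORM generates: capture the statement from its query log, run it through the planner, and confirm it uses the index you expect.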

Concurrency Models and Backpressure

Concurrency is where abstractions can feel “safe” in development and then fail loudly under load. A framework’s default model often hides the real constraint: you’re not just serving requests—you’re managing contention for CPU, threads, sockets, and downstream capacity.

Thread-per-request vs async: different failure shapes

Thread-per-request (common in classic web stacks) is simple: each request gets a worker thread. It breaks when slow I/O (database, API calls) causes threads to pile up. Once the thread pool is exhausted, new requests queue, latency spikes, and eventually you hit timeouts—while the server is still “busy” doing nothing but waiting.

Async/event-loop models handle many in-flight requests with fewer threads, so they’re great at high concurrency. They break differently: one blocking call (a sync library, slow JSON parsing, heavy logging) can stall the event loop, turning “one slow request” into “everything slow.” Async also makes it easy to create too much concurrency, overwhelming a dependency faster than thread limits would.

Backpressure: the missing contract

Backpressure is the system telling callers, “slow down; I can’t safely accept more.” Without it, a slow dependency (database, payment provider) doesn’t just slow responses—it increases in-flight work, memory usage, and queue lengths. That extra work makes the dependency even slower, creating a feedback loop.
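One simple way to express that contract is a bounded queue that refuses work when it is full. This is a minimal single-process sketch; `BoundedInbox` and its limit are hypothetical names, not part of any framework.

```python
import queue

class BoundedInbox:
    """Accepts work up to a fixed limit and rejects the rest instead of growing."""
    def __init__(self, max_pending):
        self._queue = queue.Queue(maxsize=max_pending)
        self.rejected = 0

    def submit(self, job):
        """Return True if accepted; False tells the caller to slow down or try later."""
        try:
            self._queue.put_nowait(job)
            return True
        except queue.Full:
            self.rejected += 1
            return False

    def take(self):
        return self._queue.get_nowait()

    def pending(self):
        return self._queue.qsize()
```

In an HTTP service, a `False` here would typically become a fast 429 or 503 response, so callers learn about the overload instead of waiting on a timeout.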

Timeouts and retry storms

Timeouts must be explicit and layered: client, service, and dependency. If timeouts are too long, queues grow and recovery takes longer. If retries are automatic and aggressive, you can trigger a retry storm: a dependency slows, calls time out, callers retry, load multiplies, and the dependency collapses.
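A common way to make retries less aggressive is to cap the number of attempts and spread them out with randomized exponential backoff. The sketch below is one possible shape, not a prescribed policy; the function names, delays, and limits are assumptions you would tune from your own measurements.

```python
import random
import time

def backoff_delays(max_retries, base_s=0.1, cap_s=5.0, rng=random):
    """Yield "full jitter" delays: uniform in [0, min(cap, base * 2**attempt)]."""
    for attempt in range(max_retries):
        yield rng.uniform(0, min(cap_s, base_s * 2 ** attempt))

def call_with_retries(operation, max_retries=3, sleep=time.sleep, rng=random):
    """Try once, then retry up to max_retries times when the call times out."""
    delays = backoff_delays(max_retries, rng=rng)
    while True:
        try:
            return operation()
        except TimeoutError:
            delay = next(delays, None)
            if delay is None:
                raise  # retries exhausted: surface the failure instead of adding load
            sleep(delay)
```

The jitter matters as much as the backoff: without it, clients that failed together retry together, and the dependency sees the same spike again a moment later.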

Mitigations that scale

- Use bulkheads to isolate resources (separate thread pools/connection pools per dependency), so one slow component can’t consume everything.

- Add circuit breakers to stop calling a failing dependency and give it time to recover.

- Implement request shedding (fail fast with a clear error) when queues exceed safe limits—better to drop some traffic than to make all traffic time out unpredictably.
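To show the circuit breaker idea from the list above, here is a deliberately small, single-threaded sketch. The class name, thresholds, and the simple "one trial call after the cool-down" behavior are assumptions; production libraries add thread safety, metrics, and finer-grained half-open handling.

```python
import time

class CircuitOpenError(Exception):
    """Raised when the breaker refuses a call without touching the dependency."""

class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, operation):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("dependency is cooling down")
            # Cool-down elapsed: fall through and allow a trial call.
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None
        return result
```

While the circuit is open, callers fail immediately instead of holding threads and connections, which is what gives the struggling dependency room to recover.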

Networking and Middleware Overhead

Frameworks make networking feel like “just calling an endpoint.” Under load, that abstraction often leaks through the invisible work done by middleware stacks, serialization, and payload handling.

The per-hop tax of “simple” middleware

Each layer—API gateway, auth middleware, rate limiting, request validation, observability hooks, retries—adds a little time. One extra millisecond rarely matters in development; at scale, a handful of middleware hops can turn a 20 ms request into 60–100 ms, especially when queues form.

The key is that latency doesn’t just add—it amplifies. Small delays increase concurrency (more in-flight requests), which increases contention (thread pools, connection pools), which increases delays again.

Serialization costs and payload size surprises

JSON is convenient, but encoding/decoding large payloads can dominate CPU. The leak shows up as “network” slowness that’s actually application CPU time, plus extra memory churn from allocating buffers.

Large payloads also slow everything around them:

- More time in transit and more time copying between buffers

- More GC pressure in managed runtimes

- Longer tail latencies when a few big responses block shared resources

Headers, compression, and streaming vs buffering

Headers can quietly bloat requests (cookies, auth tokens, tracing headers). That bloat gets multiplied across every call and every hop.

Compression is another tradeoff. It can save bandwidth, but it costs CPU and can add latency—especially when you compress small payloads or compress multiple times through proxies.

Finally, streaming vs buffering matters. Many frameworks buffer entire request/response bodies by default (to enable retries, logging, or content-length calculation). That’s convenient, but at high volume it increases memory usage and creates head-of-line blocking. Streaming helps keep memory predictable and reduces time-to-first-byte, but it requires more careful error handling.

Practical mitigations

Treat payload size and middleware depth as budgets, not afterthoughts:

- Set payload and header budgets; enforce them with limits and warnings.

- Prefer pagination and partial responses over “return everything” endpoints.

- Stream large uploads/downloads; avoid logging full bodies.

- Use binary formats (e.g., Protobuf) where latency/CPU is critical.

- Compress selectively (size thresholds, one place in the chain).

When scale exposes networking overhead, the fix is often less “optimize the network” and more “stop doing hidden work on every request.”
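As one example of "compress selectively," the sketch below skips compression for small bodies and for bodies that do not shrink. The 1 KiB threshold and the `maybe_compress` helper are assumptions for illustration; the right cutoff depends on your payloads and CPU budget.

```python
import gzip

MIN_COMPRESS_BYTES = 1024  # hypothetical threshold; tune from measurements

def maybe_compress(body: bytes, min_bytes: int = MIN_COMPRESS_BYTES):
    """Return (payload, content_encoding). Small or incompressible bodies pass through."""
    if len(body) < min_bytes:
        return body, "identity"
    compressed = gzip.compress(body)
    if len(compressed) >= len(body):
        return body, "identity"
    return compressed, "gzip"
```

Doing this in exactly one place in the request path also avoids paying the CPU cost again at every proxy.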

Caching: When the “Easy” Fix Creates New Failure Modes

Caching is often treated like a simple switch: add Redis (or a CDN), watch latency drop, move on. Under real load, caching is an abstraction that can leak badly—because it changes where work happens, when it happens, and how failures propagate.

Caching isn’t a free speed boost

A cache adds extra network hops, serialization, and operational complexity. It also introduces a second “source of truth” that can be stale, partially filled, or unavailable. When things go wrong, the system doesn’t just get slower—it can behave differently (serving old data, amplifying retries, or overloading the database).

Common failure modes: stampedes, keys, and invalidation

Cache stampedes happen when many requests miss the cache at once (often after an expiry) and all rush to rebuild the same value. At scale, this can turn a small miss rate into a database spike.

Poor key design is another silent issue. If keys are too broad (e.g., user:feed without including parameters), you serve incorrect data. If keys are too specific (including timestamps, random IDs, or unordered query params), you get near-zero hit rates and pay the overhead for nothing.
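A small sketch of a key builder that avoids both problems: it includes every parameter that affects the result, and it sorts them so that parameter order does not create duplicate entries. The `cache_key` helper and key format are hypothetical.

```python
from urllib.parse import urlencode

def cache_key(prefix, params):
    """Build a key from all result-affecting parameters, in a stable order."""
    canonical = urlencode(sorted((str(k), str(v)) for k, v in params.items()))
    return f"{prefix}?{canonical}" if canonical else prefix

# Same logical request, different parameter order: both map to one cache entry.
key_a = cache_key("user:feed", {"user_id": 7, "page": 2})
key_b = cache_key("user:feed", {"page": 2, "user_id": 7})
print(key_a)
```

Anything that varies per request but does not change the result (timestamps, request IDs) should stay out of `params`.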

Invalidation is the classic trap: updating the database is easy; ensuring every related cached view is refreshed is not. Partial invalidation leads to confusing “it’s fixed for me” bugs and inconsistent reads.

Hot keys and uneven traffic

Real traffic isn’t evenly distributed. A celebrity profile, a popular product, or a shared config endpoint can become a hot key, concentrating load on a single cache entry and its backing store. Even if average performance looks fine, tail latency and node-level pressure can explode.

Mitigations that work in practice

- Use TTL jitter so expirations don’t align.

- Add request coalescing (single-flight) so only one request rebuilds a missing key while others wait.

- Consider tiered caches (in-process LRU + shared cache) to reduce network overhead and protect Redis.

- Apply rate limits and circuit breakers around cache-miss paths so a cache incident doesn’t immediately become a database incident.
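The first two items in the list above can be sketched in a few lines. This is a simplified in-process version under stated assumptions: `jittered_ttl` and `SingleFlightCache` are hypothetical names, the cache never expires entries, and a shared cache like Redis would need a distributed equivalent.

```python
import random
import threading

def jittered_ttl(base_s, spread=0.1, rng=random):
    """Pick a TTL within roughly +/- spread of the base so expirations do not align."""
    return base_s * rng.uniform(1 - spread, 1 + spread)

class SingleFlightCache:
    """In-process cache where concurrent misses for one key share a single load."""
    def __init__(self):
        self._lock = threading.Lock()
        self._values = {}
        self._in_flight = {}  # key -> Event that is set when the leader finishes

    def get(self, key, loader):
        with self._lock:
            if key in self._values:
                return self._values[key]
            done = self._in_flight.get(key)
            leader = done is None
            if leader:
                done = self._in_flight[key] = threading.Event()
        if leader:
            try:
                value = loader(key)
                with self._lock:
                    self._values[key] = value
                return value
            finally:
                with self._lock:
                    del self._in_flight[key]
                done.set()
        done.wait()
        # The value is cached now; if the leader failed, one waiter becomes the new leader.
        return self.get(key, loader)
```

With this shape, a burst of requests for the same missing key results in one call to the backing store rather than one per request.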

Memory, Garbage Collection, and Resource Leaks

Frameworks often make memory feel “managed,” which is comforting—until traffic climbs and latency starts spiking in ways that don’t match CPU graphs. Many defaults are tuned for developer convenience, not for long-running processes under sustained load.

How defaults hide memory growth and GC pauses

High-level frameworks routinely allocate short-lived objects per request: request/response wrappers, middleware context objects, JSON trees, regex matchers, and temporary strings. Individually, these are small. At scale, they create constant allocation pressure, pushing the runtime to run garbage collection (GC) more often.

GC pauses can become visible as brief but frequent latency spikes. As heaps grow, the pauses often get longer—not necessarily because you leaked, but because the runtime needs more time to scan and compact memory.

Allocation patterns, large heaps, and fragmentation

Under load, a service may promote objects into older generations (or similar long-lived regions) simply because they survived a few GC cycles while waiting in queues, buffers, connection pools, or in-flight requests. This can bloat the heap even if the application is “correct.”

Fragmentation is another hidden cost: memory can be free but not reusable for the sizes you need, so the process keeps asking the OS for more.

Leak vs. high-but-stable memory

A true leak is unbounded growth over time: memory rises, never returns, and eventually triggers OOM kills or extreme GC thrash. High-but-stable usage is different: memory climbs to a plateau after warm-up, then stays roughly flat.

Mitigations that don’t backfire

Start with profiling (heap snapshots, allocation flame graphs) to find hot allocation paths and retained objects.
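If your service happens to run on Python, the standard library's `tracemalloc` module gives a quick first look at which source lines are retaining memory. The helper below is a sketch; `top_allocation_growth` is a hypothetical name, and other runtimes have their own equivalents (heap dumps, allocation profilers).

```python
import tracemalloc

def top_allocation_growth(workload, limit=3):
    """Run workload() and report the source lines whose live allocations grew the most."""
    tracemalloc.start()
    try:
        before = tracemalloc.take_snapshot()
        retained = workload()  # hold the result so its memory is still live
        after = tracemalloc.take_snapshot()
    finally:
        tracemalloc.stop()
    diffs = after.compare_to(before, "lineno")
    del retained
    return [(str(diff.traceback), diff.size_diff) for diff in diffs[:limit]]
```

Comparing two snapshots taken some time apart under steady load is a simple way to tell a plateau from growth that never stops.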

Be cautious with pooling: it can reduce allocations, but a poorly sized pool can pin memory and worsen fragmentation. Prefer reducing allocations first (streaming instead of buffering, avoiding unnecessary object creation, limiting per-request caching), then add pooling only where measurements show clear wins.

Observability Leaks: Logging, Metrics, and Tracing at Volume

Observability tools often feel “free” because the framework gives you convenient defaults: request logs, auto-instrumented metrics, and one-line tracing. Under real traffic, those defaults can become part of the workload you’re trying to observe.

When observability becomes the bottleneck

Per-request logging is the classic example. A single line per request looks harmless—until you hit thousands of requests per second. Then you’re paying for string formatting, JSON encoding, disk or network writes, and downstream ingestion. The leak shows up as higher tail latency, CPU spikes, log pipelines falling behind, and sometimes request timeouts caused by synchronous log flushing.

Metrics can overload systems in a quieter way. Counters and histograms are cheap when you have a small number of time series. But frameworks often encourage adding tags/labels like user_id, email, path, or order_id. That leads to cardinality explosions: instead of one metric, you’ve created millions of unique series. The result is bloated memory usage in the metrics client and the backend, slow queries in dashboards, dropped samples, and surprise bills.
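The arithmetic behind a cardinality explosion is just multiplication. The sketch below computes a worst-case series count for one metric; the label names and value counts are made-up numbers for illustration.

```python
from math import prod

def series_count(label_cardinalities):
    """Worst-case time series for one metric: the product of distinct values per label."""
    return prod(label_cardinalities.values())

# Hypothetical labels with a bounded set of values.
bounded = {"method": 5, "status_class": 5, "route": 40}
# The same metric after someone adds a per-user label.
unbounded = {**bounded, "user_id": 100_000}

print(series_count(bounded))
print(series_count(unbounded))
```

In this example one extra label takes the metric from 1,000 possible series to 100,000,000. Not every combination will occur in practice, but the ceiling is what your metrics backend has to survive.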

Tracing: visibility with a price tag

Distributed tracing adds storage and compute overhead that grows with traffic and with the number of spans per request. If you trace everything by default, you may pay twice: once in app overhead (creating spans, propagating context) and again in the tracing backend (ingestion, indexing, retention).

Sampling is how teams regain control, but it’s easy to do wrong. Keep too few traces and you miss rare failures; keep too many and tracing becomes cost-prohibitive. A practical approach is to keep a larger share of errors and high-latency requests, and a smaller share of healthy fast paths.
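A sketch of that kind of sampling rule, assuming the decision is made after the request finishes so the outcome is known. The `keep_trace` function, the 500 ms threshold, and the 1% baseline rate are hypothetical values, not recommendations.

```python
import random

def keep_trace(is_error, duration_ms, slow_ms=500, baseline_rate=0.01, rng=random):
    """Keep every error and slow request; keep a small random share of the rest."""
    if is_error or duration_ms >= slow_ms:
        return True
    return rng.random() < baseline_rate
```

Deciding after the fact usually means buffering spans until the request completes, which has its own memory cost, so budget for it like any other workload.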

If you want a baseline for what to collect (and what to avoid), see /blog/observability-basics.

What to do when you see the leak

Treat observability as production traffic: set budgets (log volume, metric series count, trace ingestion), review tags for cardinality risk, and load-test with instrumentation enabled. The goal isn’t “less observability”—it’s observability that still works when your system is under pressure.

Distributed Systems: Where “Simple” Becomes Coupling

Frameworks often make calling another service feel like calling a local function: userService.getUser(id) returns quickly, errors are “just exceptions,” and retries look harmless. At small scale, that illusion holds. At large scale, the abstraction leaks because every “simple” call carries hidden coupling: latency, capacity limits, partial failures, and version mismatches.

Hidden coupling across services

A remote call couples two teams’ release cycles, data models, and uptime. If Service A assumes Service B is always available and fast, A’s behavior is no longer defined by its own code—it’s defined by B’s worst day. This is how systems become tightly bound even when the code looks modular.

Transactions, consistency, and idempotency

Distributed transactions are a common trap: what looked like “save user, then charge card” becomes a multi-step workflow across databases and services. Two-phase commit rarely stays simple in production, so many systems switch to eventual consistency (e.g., “payment will be confirmed shortly”). That shift forces you to design for retries, duplicates, and out-of-order events.

Idempotency becomes essential: if a request is retried due to a timeout, it must not create a second charge or a second shipment. Framework-level retry helpers can amplify problems unless your endpoints are explicitly safe to repeat.
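One common way to make a write safe to repeat is an idempotency key supplied by the caller. The toy service below keeps results in memory; `PaymentService` and its fields are hypothetical, and a real implementation would persist the key atomically with the charge.

```python
class PaymentService:
    """Toy in-memory service that replays the stored result for a repeated key."""
    def __init__(self):
        self._results_by_key = {}
        self.charges_made = 0

    def charge(self, idempotency_key, amount_cents):
        if idempotency_key in self._results_by_key:
            return self._results_by_key[idempotency_key]  # retry: no second charge
        self.charges_made += 1
        result = {"charge_id": self.charges_made, "amount_cents": amount_cents}
        self._results_by_key[idempotency_key] = result
        return result

service = PaymentService()
first = service.charge("order-42", 1999)
retry = service.charge("order-42", 1999)  # e.g. the client timed out and retried
```

Here the retry returns the original result and `charges_made` stays at 1, which is the property a framework-level retry helper silently depends on.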

Failure propagation

One slow dependency can exhaust thread pools, connection pools, or queues, creating a ripple effect: timeouts trigger retries, retries increase load, and soon unrelated endpoints degrade. “Just add more instances” may worsen the storm if everyone retries at once.

Mitigations that keep coupling explicit

Define clear contracts (schemas, error codes, and versioning), set timeouts and budgets per call, and implement fallbacks (cached reads, degraded responses) where appropriate.

Finally, set SLOs per dependency and enforce them: if Service B can’t meet its SLO, Service A should fail fast or degrade gracefully rather than silently dragging the whole system down.

How to Diagnose Leaks Without Guesswork

When an abstraction leaks at scale, it often shows up as a vague symptom (timeouts, CPU spikes, slow queries) that tempts teams into premature rewrites. A better approach is to turn the hunch into evidence.

A practical, step-by-step workflow

1) Reproduce (make it fail on demand).

Capture the smallest scenario that still triggers the problem: the endpoint, background job, or user flow. Reproduce it locally or in staging with production-like configuration (feature flags, timeouts, connection pools).

2) Measure (pick two or three signals).

Choose a few metrics that tell you where time and resources go: p95/p99 latency, error rate, CPU, memory, GC time, DB query time, queue depth. Avoid adding dozens of new graphs mid-incident.

3) Isolate (narrow the suspect).

Use tooling to separate “framework overhead” from “your code”:

- Profilers (CPU, memory, allocation) to find hot paths and churn

- Tracing (OpenTelemetry, vendor APM) to see time per hop and call depth

- DB query planner / EXPLAIN to validate ORM-generated SQL and index usage

- Load tests (k6, Gatling, Locust) to reproduce under controlled pressure

4) Confirm (prove cause and effect).

Change one variable at a time: bypass the ORM for one query, disable a middleware, reduce log volume, cap concurrency, or alter pool sizes. If the symptom moves predictably, you’ve found the leak.

Stress test like production, not like a demo

Use realistic data sizes (row counts, payload sizes) and realistic concurrency (bursts, long tails, slow clients). Many leaks only appear when caches are cold, tables are large, or retries amplify load.

“Before you rewrite” checklist

- Can you reproduce it with a load test and capture a trace?

- Do you have a profiler snapshot showing the top consumers?

- Have you inspected the worst queries with the query planner?

- Have you tried a small, reversible change that isolates the layer?

- Can you quantify improvement (p95/p99, cost, error rate) after the fix?

Mitigation Strategies and When to Drop Down a Level

Abstraction leaks aren’t a moral failure of a framework—they’re a signal that your system’s needs have outgrown the “default path.” The goal isn’t to abandon frameworks, but to be deliberate about when you tune them and when you bypass them.

Tune the framework first (when it’s still doing the right job)

Stay within the framework when the issue is configuration or usage rather than a fundamental mismatch. Good candidates:

- A slow endpoint that improves with better indexes, query shaping, and connection pool settings

- Excessive logging that can be fixed with sampling, log levels, and structured fields

- Thread/worker starvation that improves with concurrency limits and timeouts

If you can fix it by tightening settings and adding guardrails, you keep upgrades easy and reduce “special cases.”

Use escape hatches (when you need precision)

Most mature frameworks provide ways to step outside the abstraction without rewriting everything. Common patterns:

- Escape hatches: raw SQL for one hot query, direct HTTP client settings, custom serialization for one payload

- Thin adapters: a small wrapper around a framework component so you can swap implementations later

- Boundary layers: keep the framework at the edges (routing, auth), but isolate core business logic behind clear interfaces

This keeps the framework as a tool, not a dependency that dictates architecture.

Operational practices that prevent “fixes” from becoming risks

Mitigation is as much operational as it is code:

- Capacity planning: define budgets (p95 latency, CPU, DB time) and track them per release

- Canaries and safe rollouts: roll out to a small slice first, compare error rates/latency, then expand

- Load testing that matches reality: include peak traffic patterns, retries, and downstream slowness

For related rollout practices, see /blog/canary-releases.

A simple decision framework

Drop down a level when (1) the issue hits a critical path, (2) you can measure the win, and (3) the change won’t create a long-term maintenance tax your team can’t afford. If only one person understands the bypass, it’s not “fixed”—it’s fragile.

Where Koder.ai fits in (without adding more abstractions you can’t see)

When you’re hunting leaks, speed matters—but so does keeping changes reversible. Teams often use Koder.ai to spin up small, isolated reproductions of production issues (a minimal React UI, a Go service, a PostgreSQL schema, and a load-test harness) without burning days on scaffolding. Its planning mode helps document what you’re changing and why, while snapshots and rollback make it safer to try “drop down a level” experiments (like swapping one ORM query for raw SQL) and then revert cleanly if the data doesn’t support it.

If you’re doing this work across environments, Koder.ai’s built-in deployment/hosting and exportable source code can also help keep the diagnosis artifacts (benchmarks, repro apps, internal dashboards) as real software—versioned, shareable, and not stuck in someone’s local folder.

FAQ

What is an “abstraction leak” in practical terms?

A leaky abstraction is a layer that tries to hide complexity (ORMs, retry helpers, caching wrappers, middleware), but under load the hidden details start changing outcomes.

Practically, it’s when your “simple mental model” stops predicting real behavior, and you’re forced to understand things like query plans, connection pools, queue depth, GC, timeouts, and retries.

Why do abstraction leaks stay invisible early on?

Early systems have spare capacity: small tables, low concurrency, warm caches, and few failure interactions.

As volume grows, tiny overheads become steady bottlenecks, and rare edge cases (timeouts, partial failures) become normal. That’s when the hidden costs and limits of the abstraction show up in production behavior.

What are the most common signs that an abstraction is leaking?

Look for patterns that don’t improve predictably when you add resources:

- p95/p99 latency grows non-linearly while averages look fine

- Timeouts only during peak/bursty traffic

- Queues/backlogs rising (jobs, consumers, thread pools)

- Throughput ceilings (more instances, little RPS gain)

- “Mystery” cost spikes in DB/cache/network without a clear feature change

How can I tell “abstraction leak” vs. just underprovisioning?

Underprovisioning usually improves roughly linearly when you add capacity.

A leak often shows:

- Extra work being generated (N+1 queries, chatty calls, heavy serialization/logging)

- A single dependency becomes the limiter (DB, cache, external API)

- Long-tail latency and queueing dominate even when app CPU looks moderate

Use the checklist in the post: if doubling resources doesn’t fix it proportionally, suspect a leak.

Why do ORMs become a problem at scale, and what should I do first?

ORMs can hide the fact that each object operation becomes SQL. Common leaks include:

- N+1 queries (one request becomes hundreds/thousands of round trips)

- Over-fetching full rows/relations when you need a few fields

- Missing/unused indexes leading to scans

- Surprise expensive joins from “include relation” helpers

Mitigate with eager loading (carefully), selecting only needed columns, pagination, batching, and validating generated SQL with EXPLAIN.

What role do connection pools and transaction length play in leaks?

Connection pools cap concurrency to protect the DB, but hidden query proliferation can exhaust the pool.

When the pool is full, requests queue in the app, increasing latency and holding resources longer. Long transactions worsen it by holding locks and reducing effective concurrency.

Practical fixes:

- Reduce queries per request (fix N+1, batch)

- Shorten transactions and avoid accidental long-lived transactions

- Size pools intentionally and monitor wait time, not just pool size

How do thread-per-request and async models leak differently under load?

Thread-per-request fails by running out of threads when I/O is slow; everything queues and timeouts spike.

Async/event-loop fails when:

- A blocking call stalls the loop, slowing everything

- You create too much concurrency and overwhelm dependencies

Either way, the “framework handles concurrency” abstraction leaks into needing explicit limits, timeouts, and backpressure.

What is backpressure and why does it matter for preventing cascades?

Backpressure is a mechanism for saying “slow down” when a component can’t safely accept more work.

Without it, slow dependencies increase in-flight requests, memory use, and queue length—making the dependency even slower (a feedback loop).

Common tools:

- Concurrency limits per dependency

- Bounded queues

- Request shedding (fail fast)

- Bulkheads (isolate resources so one dependency can’t consume everything)

Why do retries cause “retry storms,” and how can I avoid them?

Automatic retries can turn a slowdown into an outage:

- Dependency slows → calls time out

- Callers retry → load multiplies

- Dependency collapses → more timeouts → more retries

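Mitigate with explicit, layered timeouts, circuit breakers that stop calls to a failing dependency, and endpoints that are safe to retry (idempotent).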

How can logging/metrics/tracing become an abstraction leak at scale?

Instrumentation does real work at high traffic:

- Logging: formatting + encoding + I/O + ingestion can hit CPU/latency and create pipeline backpressure

- Metrics: high-cardinality labels (e.g., user_id, email, order_id) can explode time series count and cost

- Tracing: span creation and backend ingestion scale with traffic and span count

Practical controls: