Apr 22, 2025·8 min

Samsung SDS and Scaling Enterprise IT Where Uptime Is the Product

A practical look at how Samsung SDS-style enterprise platforms scale in partner ecosystems where uptime, change control, and trust are the product.


When an enterprise depends on shared platforms to run finance, manufacturing, logistics, HR, and customer channels, uptime stops being a “nice-to-have” quality attribute. It becomes the thing being sold. For an organization like Samsung SDS—operating as a large-scale enterprise IT services and platform provider—reliability isn’t just a feature of the service; it is the service.

In consumer apps, a brief outage might be annoying. In enterprise ecosystems, it can pause revenue recognition, delay shipments, break compliance reporting, or trigger contractual penalties. “Reliability is the product” means success is judged less by new features and more by outcomes like business processes completing on time, integrations staying healthy, predictable performance at peak, and fast recovery when something breaks.

It also means engineering and operations aren’t separate “phases.” They’re part of the same promise: customers and internal stakeholders expect systems to work—consistently, measurably, and under stress.

Enterprise reliability is rarely about a single application. It’s about a network of dependencies across:

This interconnectedness increases the blast radius of failures: one degraded service can cascade into dozens of downstream systems and external obligations.

This post focuses on examples and repeatable patterns—not internal or proprietary specifics. You’ll learn how enterprises approach reliability through an operating model (who owns what), platform decisions (standardization that still supports delivery speed), and metrics (SLOs, incident performance, and business-aligned targets).

By the end, you should be able to map the same ideas to your own environment—whether you run a central IT organization, a shared services team, or a platform group supporting an ecosystem of dependent businesses.

Samsung SDS is widely associated with running and modernizing complex enterprise IT: the systems that keep large organizations operating day after day. Rather than focusing on a single app or product line, its work sits closer to the “plumbing” of the enterprise—platforms, integration, operations, and the services that make business-critical workflows dependable.

In practice, this usually spans several categories that many large companies need at the same time:

Scale isn’t only about traffic volume. Inside conglomerates and large partner networks, scale is about breadth: many business units, different compliance regimes, multiple geographies, and a mix of modern cloud services alongside legacy systems that still matter.

That breadth creates a different operating reality:

The hardest constraint is dependency coupling. When core platforms are shared—identity, network, data pipelines, ERP, integration middleware—small issues can ripple outward. A slow authentication service can look like “the app is down.” A data pipeline delay can halt reporting, forecasting, or compliance submissions.

This is why enterprise providers like Samsung SDS are often judged less by features and more by outcomes: how consistently shared systems keep thousands of downstream workflows running.

Enterprise platforms rarely fail in isolation. In a Samsung SDS–style ecosystem, a “small” outage inside one service can ripple across suppliers, logistics partners, internal business units, and customer-facing channels—because everyone is leaning on the same set of shared dependencies.

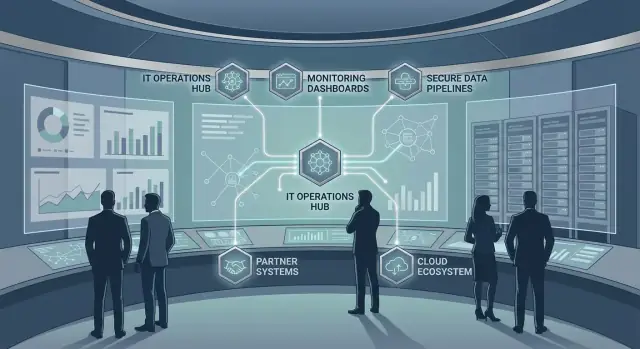

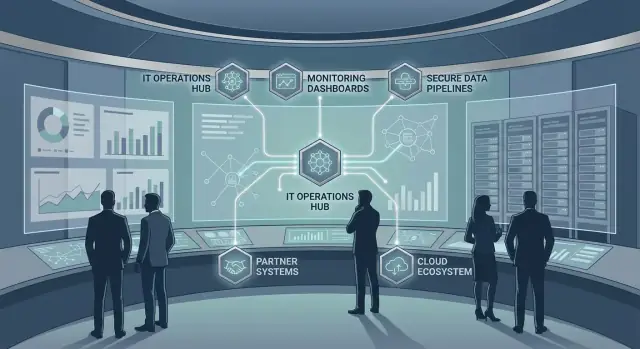

Most enterprise journeys traverse a familiar chain of ecosystem components:

When any one of these degrades, it can block multiple “happy paths” at once—checkout, shipment creation, returns, invoicing, or partner onboarding.

Ecosystems integrate through different “pipes,” each with its own failure pattern:

A key risk is correlated failure: multiple partners depend on the same endpoint, the same identity provider, or the same shared data set—so one fault becomes many incidents.

Ecosystems introduce problems you don’t see in single-company systems:

Reducing blast radius starts with explicitly mapping dependencies and partner journeys, then designing integrations that degrade gracefully rather than fail all at once (see also /blog/reliability-targets-slos-error-budgets).
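As a rough illustration of dependency mapping, the sketch below walks a small dependency map to list everything downstream of a degraded service. The service names and the `DEPENDS_ON` structure are invented for this example; a real map would come from your own service inventory.

```python
from __future__ import annotations

from collections import deque

# Hypothetical map: each service lists the shared services it depends on.
DEPENDS_ON = {
    "checkout": ["identity", "payments"],
    "shipment-creation": ["identity", "erp"],
    "invoicing": ["erp"],
    "payments": ["identity"],
    "identity": [],
    "erp": [],
}


def blast_radius(failed: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every service that directly or transitively depends on `failed`."""
    # Invert the map so we can walk from a dependency to its dependents.
    dependents: dict[str, set[str]] = {name: set() for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)

    # Breadth-first walk over dependents, visiting each service once.
    affected: set[str] = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for service in dependents.get(current, set()):
            if service not in affected:
                affected.add(service)
                queue.append(service)
    return affected


print(sorted(blast_radius("identity", DEPENDS_ON)))
print(sorted(blast_radius("erp", DEPENDS_ON)))
```

Even a map this small shows why a shared service such as identity deserves tighter targets than any single application that sits on top of it.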

Standardization only helps if it makes teams faster. In large enterprise ecosystems, platform foundations succeed when they remove repeated decisions (and repeated mistakes) while still giving product teams room to ship.

A practical way to think about the platform is as clear layers, each with a distinct contract:

This separation keeps “enterprise-grade” requirements (security, availability, auditability) built into the platform rather than re-implemented by every application.

Golden paths are approved templates and workflows that make the secure, reliable option the easiest option: a standard service skeleton, preconfigured pipelines, default dashboards, and known-good stacks. Teams can deviate when needed, but they do so intentionally, with explicit ownership for the extra complexity.

A growing pattern is to treat these golden paths as productized starter kits—including scaffolding, environment creation, and “day-2” defaults (health checks, dashboards, alert rules). In platforms like Koder.ai, teams can go a step further by generating a working app through a chat-driven workflow, then using planning mode, snapshots, and rollback to keep changes reversible while still moving quickly. The point isn’t the tooling brand—it’s making the reliable path the lowest-friction path.

Multi-tenant platforms reduce cost and speed onboarding, but they require strong guardrails (quotas, noisy-neighbor controls, clear data boundaries). Dedicated environments cost more, yet can simplify compliance, performance isolation, and customer-specific change windows.

Good platform choices shrink the daily decision surface: fewer “Which logging library?”, “How do we rotate secrets?”, “What’s the deployment pattern?” conversations. Teams focus on business logic while the platform quietly enforces consistency—and that’s how standardization increases delivery speed instead of slowing it.

Enterprise IT providers don’t “do reliability” as a nice-to-have—reliability is part of what customers buy. The practical way to make that real is to translate expectations into measurable targets that everyone can understand and manage.

An SLI (Service Level Indicator) is a measurement (for example: “percentage of checkout transactions that succeeded”). An SLO (Service Level Objective) is the target for that measurement (for example: “99.9% of checkout transactions succeed each month”).
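To make the distinction concrete, here is a minimal sketch that computes the checkout SLI from raw counts and compares it with the 99.9% SLO. The function names and the monthly counts are assumptions made up for illustration.

```python
def success_rate_sli(succeeded: int, total: int) -> float:
    """SLI: percentage of transactions that succeeded in the window."""
    if total == 0:
        # Assumption: a window with no traffic counts as meeting the target.
        return 100.0
    return 100.0 * succeeded / total


def meets_slo(sli_percent: float, slo_percent: float) -> bool:
    """SLO check: the measured SLI must be at or above the target."""
    return sli_percent >= slo_percent


# Invented monthly counts for a checkout service.
sli = success_rate_sli(succeeded=1_998_400, total=2_000_000)
print(f"SLI = {sli:.2f}%, meets 99.9% SLO: {meets_slo(sli, 99.9)}")
```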

Why it matters: contracts and business operations depend on clear definitions. Without them, teams argue after an incident about what “good” looked like. With them, you can align service delivery, support, and partner dependencies around the same scoreboard.

Not every service should be judged only by uptime. Common enterprise-relevant targets include:

For data platforms, “99.9% uptime” can still mean a failed month if key datasets are late, incomplete, or wrong. Choosing the right indicators prevents false confidence.

An error budget is the allowed amount of “badness” (downtime, failed requests, delayed pipelines) implied by the SLO. It turns reliability into a decision tool:

This helps enterprise providers balance delivery commitments with uptime expectations—without relying on opinion or hierarchy.
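The arithmetic behind an error budget is simple enough to sketch. The example below derives the budget from an SLO, reports how much is left, and maps that to a release decision. The thresholds and policy wording are illustrative assumptions, not a standard.

```python
def error_budget(total_events: int, slo: float) -> float:
    """Number of 'bad' events the SLO tolerates in the window."""
    return total_events * (1.0 - slo)


def budget_remaining(total_events: int, bad_events: int, slo: float) -> float:
    """Fraction of the budget still unspent (negative means overspent)."""
    budget = error_budget(total_events, slo)
    if budget == 0:
        return 0.0 if bad_events == 0 else float("-inf")
    return 1.0 - bad_events / budget


def release_policy(remaining: float) -> str:
    # Illustrative thresholds; agree on real ones with service owners.
    if remaining <= 0:
        return "pause feature releases; prioritize reliability fixes"
    if remaining < 0.25:
        return "reduce change volume; require extra review"
    return "ship normally"


# A 99.9% SLO over 2,000,000 requests tolerates about 2,000 failures.
remaining = budget_remaining(total_events=2_000_000, bad_events=1_600, slo=0.999)
print(f"{remaining:.0%} of budget left -> {release_policy(remaining)}")

# The same SLO expressed as time: minutes of downtime in a 30-day window.
downtime_minutes = 30 * 24 * 60 * (1 - 0.999)
print(f"99.9% over 30 days allows about {downtime_minutes:.1f} minutes of downtime")
```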

Effective reporting is tailored:

The goal isn’t more dashboards—it’s consistent, contract-aligned visibility into whether reliability outcomes support the business.

When uptime is part of what customers buy, observability can’t be an afterthought or a “tooling team” project. At enterprise scale—especially in ecosystems with partners and shared platforms—good incident response starts with seeing the system the same way operators experience it: end-to-end.

High-performing teams treat logs, metrics, traces, and synthetic checks as one coherent system:

The goal is quick answers to: “Is this user-impacting?”, “How big is the blast radius?”, and “What changed recently?”

Enterprise environments generate endless signals. The difference between usable and unusable alerting is whether alerts are tied to customer-facing symptoms and clear thresholds. Prefer alerts on SLO-style indicators (error rate, p95 latency) over internal counters. Every page should include: affected service, probable impact, top dependencies, and a first diagnostic step.
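A symptom-based alert can be sketched as a small function that evaluates error rate and p95 latency, then builds a page carrying the four fields listed above. The `Page` class, the thresholds, and the sample data are hypothetical and chosen only to show the shape.

```python
from __future__ import annotations

import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    service: str
    probable_impact: str
    top_dependencies: list[str]
    first_step: str


def p95(latencies_ms: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty sample."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    rank = math.ceil(95 * len(ordered) / 100)
    return ordered[rank - 1]


def evaluate(service: str, latencies_ms: list[float], errors: int, requests: int,
             dependencies: list[str], max_error_rate: float = 0.01,
             max_p95_ms: float = 800.0) -> Optional[Page]:
    """Return a Page only when a user-facing symptom crosses its threshold."""
    if requests == 0 or not latencies_ms:
        return None
    symptoms = []
    error_rate = errors / requests
    if error_rate > max_error_rate:
        symptoms.append(f"error rate {error_rate:.1%}")
    latency = p95(latencies_ms)
    if latency > max_p95_ms:
        symptoms.append(f"p95 latency {latency:.0f} ms")
    if not symptoms:
        return None
    return Page(
        service=service,
        probable_impact="users see " + " and ".join(symptoms),
        top_dependencies=dependencies,
        first_step="check recent deploys and dependency dashboards",
    )


# Invented sample: most requests are fast, but the slowest 10% take 900 ms.
page = evaluate("checkout", [120.0] * 90 + [900.0] * 10, errors=5, requests=1000,
                dependencies=["identity", "payments"])
print(page)
```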

Ecosystems fail at the seams. Maintain service maps that show dependencies—internal platforms, vendors, identity providers, networks—and make them visible in dashboards and incident channels. Even if partner telemetry is limited, you can still model dependencies using synthetic checks, edge metrics, and shared request IDs.

Automate repetitive actions that reduce time-to-mitigate (rollback, feature flag disable, traffic shift). Document decisions that require judgment (customer comms, escalation paths, partner coordination). A good runbook is short, tested during real incidents, and updated as part of post-incident follow-up—not filed away.

Enterprise environments, such as the ecosystems Samsung SDS supports, don’t get to choose between “safe” and “fast.” The trick is to make change control a predictable system: low-risk changes flow quickly, while high-risk changes get the scrutiny they deserve.

Big-bang releases create big-bang outages. Teams keep uptime high by shipping in smaller slices and reducing the number of things that can go wrong at once.

Feature flags help separate “deploy” from “release,” so code can reach production without immediately affecting users. Canary deploys (releasing to a small subset first) provide an early warning before a change reaches every business unit, partner integration, or region.
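One common way to implement a gradual release behind a flag is deterministic bucketing: hash the flag name and a unit identifier (tenant, partner, or region) into one of 100 buckets and enable the flag for the first N. The sketch below assumes that approach; the flag and tenant names are hypothetical.

```python
import hashlib


def in_rollout(flag: str, unit_id: str, percent: int) -> bool:
    """Deterministically place a unit in the first `percent` of 100 buckets."""
    digest = hashlib.sha256(f"{flag}:{unit_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


# The code is deployed everywhere, but released only to a small canary slice.
tenants = [f"tenant-{n}" for n in range(1000)]
canary = [t for t in tenants if in_rollout("new-invoice-flow", t, percent=5)]
print(f"{len(canary)} of {len(tenants)} tenants see the new flow")
```

Because the bucket depends only on the flag and the unit, a tenant that is in the 5% slice stays in it when the rollout widens to 25% or 50%, which keeps partner experiences stable during the ramp.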

Release governance isn’t only paperwork—it’s how enterprises protect critical services and prove control.

A practical model includes:

The goal is to make the “right way” the easiest way: approvals and evidence are captured as part of normal delivery, not assembled after the fact.

Ecosystems have predictable stress points: end-of-month finance close, peak retail events, annual enrollment, or major partner cutovers. Change windows align deployments with those cycles.

Blackout periods should be explicit and published, so teams plan ahead rather than rushing risky work into the last day before a freeze.
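A published freeze calendar can also be checked automatically in the delivery pipeline. The sketch below assumes a simple list of inclusive date ranges; the dates and reasons are invented for illustration.

```python
from datetime import date
from typing import Optional

# Hypothetical published freeze calendar (inclusive date ranges).
BLACKOUTS = [
    (date(2025, 6, 27), date(2025, 7, 2), "month-end finance close"),
    (date(2025, 11, 24), date(2025, 12, 1), "peak retail event"),
]


def blackout_reason(day: date, blackouts=BLACKOUTS) -> Optional[str]:
    """Return the reason a deploy is blocked on `day`, or None if it is allowed."""
    for start, end, reason in blackouts:
        if start <= day <= end:
            return reason
    return None


for day in (date(2025, 6, 30), date(2025, 7, 3)):
    reason = blackout_reason(day)
    print(day, "blocked: " + reason if reason else "allowed")
```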

Not every change can be rolled back cleanly—especially schema changes or cross-company integrations. Strong change control requires deciding upfront:

When teams predefine these paths, incidents become controlled corrections instead of prolonged improvisation.

Resilience engineering starts with a simple assumption: something will break—an upstream API, a network segment, a database node, or a third‑party dependency you don’t control. In enterprise ecosystems (where Samsung SDS-type providers operate across many business units and partners), the goal isn’t “no failures,” but controlled failures with predictable recovery.

A few patterns consistently pay off at scale:

The key is to define which user journeys are “must survive” and design fallbacks specifically for them.

Disaster recovery planning becomes practical when every system has explicit targets:

Not everything needs the same numbers. A customer authentication service may require minutes of RTO and near-zero RPO, while an internal analytics pipeline can tolerate hours. Matching RTO/RPO to business impact prevents overspending while still protecting what matters.
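Recording targets per service makes it possible to score a recovery drill against them. In this sketch RTO is the maximum acceptable time to restore service and RPO is the maximum acceptable window of lost data; the service names and numbers are assumptions that mirror the examples above.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class RecoveryTarget:
    rto: timedelta  # maximum acceptable time to restore service
    rpo: timedelta  # maximum acceptable window of lost data


# Hypothetical targets matched to business impact.
TARGETS = {
    "customer-auth": RecoveryTarget(rto=timedelta(minutes=5), rpo=timedelta(0)),
    "analytics-pipeline": RecoveryTarget(rto=timedelta(hours=8), rpo=timedelta(hours=4)),
}


def drill_gaps(service: str, time_to_restore: timedelta,
               data_loss_window: timedelta, targets=TARGETS) -> list[str]:
    """Compare a drill's measured recovery against the service's targets."""
    target = targets[service]
    gaps = []
    if time_to_restore > target.rto:
        gaps.append(f"RTO missed by {time_to_restore - target.rto}")
    if data_loss_window > target.rpo:
        gaps.append(f"RPO missed by {data_loss_window - target.rpo}")
    return gaps


print(drill_gaps("customer-auth", timedelta(minutes=12), timedelta(seconds=30)))
print(drill_gaps("analytics-pipeline", timedelta(hours=3), timedelta(hours=1)))
```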

For critical workflows, replication choices matter. Synchronous replication minimizes data loss but can add write latency and reduce availability during network issues, because a write is not acknowledged until the replica confirms it. Asynchronous replication keeps writes fast and available, but a failover can lose the most recent writes that had not yet reached the replica. Good designs make these trade-offs explicit and add compensating controls (idempotency, reconciliation jobs, or clear “pending” states).
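Idempotency is the easiest of those compensating controls to show in code. After a failover, clients often retry writes they are unsure about; if each write carries an idempotency key, a retry returns the original result instead of applying twice. The `IdempotentLedger` class is a hypothetical, in-memory sketch; a real system would persist the keys alongside the data.

```python
from __future__ import annotations


class IdempotentLedger:
    """Applies each write at most once, keyed by a caller-supplied idempotency key."""

    def __init__(self) -> None:
        self.balance = 0
        self._applied: dict[str, int] = {}  # key -> balance after that write

    def apply(self, key: str, amount: int) -> int:
        if key in self._applied:
            # Replay of a write we already applied: return the original result.
            return self._applied[key]
        self.balance += amount
        self._applied[key] = self.balance
        return self.balance


ledger = IdempotentLedger()
ledger.apply("payment-001", 100)
ledger.apply("payment-001", 100)  # client retry after a timeout
ledger.apply("payment-002", 50)
print(ledger.balance)
```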

Resilience only counts if it’s exercised:

Run them regularly, track time-to-recover, and feed findings back into platform standards and service ownership.

Security failures and compliance gaps don’t just create risk—they create downtime. In enterprise ecosystems, one misconfigured account, unpatched server, or missing audit trail can trigger service freezes, emergency changes, and customer-impacting outages. Treating security and compliance as part of reliability makes “staying up” the shared goal.

When multiple subsidiaries, partners, and vendors connect to the same services, identity becomes a reliability control. SSO and federation reduce password sprawl and help users get access without risky workarounds. Just as important is least privilege: access should be time-bound, role-based, and regularly reviewed so a compromised account can’t take down core systems.

Security operations can either prevent incidents—or create them through unplanned disruption. Tie security work to operational reliability by making it predictable:

Compliance requirements (retention, privacy, audit trails) are easiest to meet when designed into platforms. Centralized logging with consistent fields, enforced retention policies, and access-controlled exports keep audits from turning into fire drills—and avoid “freeze the system” moments that interrupt delivery.

Partner integrations expand capability and blast radius. Reduce third-party risk with contractually defined security baselines, versioned APIs, clear data-handling rules, and continuous monitoring of dependency health. If a partner fails, your systems should degrade gracefully rather than fail unpredictably.
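One widely used pattern for graceful degradation is a circuit breaker: after repeated failures, stop calling the partner for a while and serve a fallback instead. The sketch below is a simplified version of that pattern, not a production library; the class, its parameters, and the example functions are assumptions.

```python
import time


class CircuitBreaker:
    """Stops calling a failing dependency for `reset_after` seconds."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, fallback):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                return fallback()  # open: skip the partner entirely
            self._opened_at = None  # half-open: allow one trial call
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            return fallback()
        self._failures = 0
        return result


def fetch_partner_rates():
    # Hypothetical partner call that is currently failing.
    raise TimeoutError("partner API did not respond")


def cached_rates():
    # Hypothetical fallback: last known good data, clearly marked as stale.
    return {"source": "cache", "stale": True}


breaker = CircuitBreaker(max_failures=2, reset_after=30.0)
for _ in range(3):
    print(breaker.call(fetch_partner_rates, cached_rates))
```

The third call never reaches the partner: the breaker is already open, so the fallback is served immediately and the failing dependency gets room to recover.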

When enterprises talk about uptime, they often mean applications and networks. But for many ecosystem workflows—billing, fulfillment, risk, and reporting—data correctness is just as operationally critical. A “successful” batch that publishes the wrong customer identifier can create hours of downstream incidents across partners.

Master data (customers, products, vendors) is the reference point everything else depends on. Treating it as a reliability surface means defining what “good” looks like (completeness, uniqueness, timeliness) and measuring it continuously.

A practical approach is to track a small set of business-facing quality indicators (for example, “% of orders mapped to a valid customer”) and alert when they drift—before downstream systems fail.
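The example indicator can be computed with a few lines of code. This sketch assumes orders arrive as dictionaries with a `customer_id` field and that valid customer IDs are available as a set; the data and the alert threshold are invented.

```python
from __future__ import annotations


def valid_customer_rate(orders: list[dict], customer_ids: set[str]) -> float:
    """Percentage of orders whose customer_id exists in master data."""
    if not orders:
        return 100.0  # assumption: an empty batch is not a quality failure
    valid = sum(1 for order in orders if order.get("customer_id") in customer_ids)
    return 100.0 * valid / len(orders)


# Invented master data and a small batch of orders.
customers = {"C-100", "C-200", "C-300"}
orders = [
    {"order_id": "O-1", "customer_id": "C-100"},
    {"order_id": "O-2", "customer_id": "C-200"},
    {"order_id": "O-3", "customer_id": "C-999"},  # unknown customer
    {"order_id": "O-4", "customer_id": "C-300"},
]

ALERT_BELOW = 99.5  # illustrative threshold
rate = valid_customer_rate(orders, customers)
if rate < ALERT_BELOW:
    print(f"ALERT: only {rate:.1f}% of orders map to a valid customer")
```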

Batch pipelines are great for predictable reporting windows; streaming is better for near-real-time operations. At scale, both need guardrails:

Trust increases when teams can answer three questions quickly: Where did this field come from? Who uses it? Who approves changes?

Lineage and cataloging aren’t “documentation projects”—they’re operational tools. Pair them with clear stewardship: named owners for critical datasets, defined access policies, and lightweight reviews for high-impact changes.

Ecosystems fail at the boundaries. Reduce partner-related incidents with data contracts: versioned schemas, validation rules, and compatibility expectations. Validate at ingest, quarantine bad records, and publish clear error feedback so issues are corrected at the source rather than patched downstream.
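Validation at ingest with a quarantine path might look like the sketch below. The contract format (field name mapped to an expected Python type) and the record fields are assumptions for illustration; real contracts usually live in a schema language and are versioned alongside the API.

```python
from __future__ import annotations

# Hypothetical version 1 of a partner order contract.
CONTRACT_V1 = {"order_id": str, "customer_id": str, "quantity": int}


def validate(record: dict, contract: dict[str, type]) -> list[str]:
    """Return human-readable errors; an empty list means the record conforms."""
    errors = []
    for field, expected in contract.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            errors.append(f"{field}: expected {expected.__name__}, got {actual}")
    return errors


def ingest(records: list[dict], contract: dict[str, type]):
    """Split records into accepted ones and quarantined ones with feedback."""
    accepted, quarantined = [], []
    for record in records:
        errors = validate(record, contract)
        if errors:
            quarantined.append({"record": record, "errors": errors})
        else:
            accepted.append(record)
    return accepted, quarantined


batch = [
    {"order_id": "O-1", "customer_id": "C-100", "quantity": 2},
    {"order_id": "O-2", "quantity": "3"},  # missing customer, wrong type
]
accepted, quarantined = ingest(batch, CONTRACT_V1)
print(len(accepted), "accepted;", quarantined[0]["errors"])
```

The error list attached to each quarantined record is what gets sent back to the partner, so the fix happens at the source.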

Reliability at enterprise scale fails most often in the gaps: between teams, between vendors, and between “run” and “build.” Governance isn’t bureaucracy for its own sake—it’s how you make ownership explicit so incidents don’t turn into multi-hour debates about who should act.

There are two common models:

Many enterprises land on a hybrid: platform teams provide paved roads, while product teams own reliability for what they ship.

A reliable organization publishes a service catalog that answers: Who owns this service? What are the support hours? What dependencies are critical? What is the escalation path?

Equally important are ownership boundaries: which team owns the database, the integration middleware, identity, network rules, and monitoring. When boundaries are unclear, incidents become coordination problems rather than technical problems.

In ecosystem-heavy environments, reliability depends on contracts. Use SLAs for customer-facing commitments, OLAs for internal handoffs, and integration contracts that specify versioning, rate limits, change windows, and rollback expectations—so partners can’t unintentionally break you.

Governance should enforce learning:

Done well, governance turns reliability from “everyone’s job” into a measurable, owned system.

You don’t need to “become Samsung SDS” to benefit from the same operating principles. The goal is to turn reliability into a managed capability: visible, measured, and improved in small, repeatable steps.

Start with a service inventory that’s good enough to use next week, not perfect.

This becomes the backbone for prioritization, incident response, and change control.

Choose 2–4 high-impact SLOs across different risk areas (availability, latency, freshness, correctness). Examples:

Track error budgets and use them to decide when to pause feature work, reduce change volume, or invest in fixes.

Tool sprawl often hides basic gaps. First, standardize what “good visibility” means:

If you can’t answer “what broke, where, and who owns it?” within minutes, add clarity before adding vendors.

Ecosystems fail at the seams. Publish partner-facing guidelines that reduce variability:

Treat integration standards as a product: documented, reviewed, and updated.

Run a 30-day pilot on 3–5 services, then expand. For more templates and examples, see /blog.

If you’re modernizing how teams build and operate services, it can help to standardize not only runtime and observability, but also the creation workflow. Platforms like Koder.ai (a chat-driven “vibe-coding” platform) can accelerate delivery while keeping enterprise controls in view—e.g., using planning mode before generating changes, and relying on snapshots/rollback when experimenting. If you’re evaluating managed support or platform help, start with constraints and outcomes on /pricing (no promises—just a way to frame options).

It means stakeholders experience reliability itself as the core value: business processes complete on time, integrations stay healthy, performance is predictable at peak, and recovery is fast when something breaks. In enterprise ecosystems, even short degradation can halt billing, shipping, payroll, or compliance reporting—so reliability becomes the primary “deliverable,” not an attribute behind the scenes.

Because enterprise workflows are tightly coupled to shared platforms (identity, ERP, data pipelines, integration middleware). A small outage can cascade into blocked orders, delayed finance close, broken partner onboarding, or contractual penalties. The “blast radius” is usually much larger than the failing component.

Common shared dependencies include:

If any of these degrade, many downstream apps can look “down” simultaneously even if they’re healthy.

Use a “good enough” inventory and map dependencies:

This becomes the basis for prioritizing SLOs, alerting, and change controls.

Pick a small set of indicators tied to outcomes, not just uptime:

Start with 2–4 SLOs the business recognizes and expand once teams trust the measurements.

An error budget is the allowed “badness” implied by an SLO (failed requests, downtime, late data). Use it as a policy:

This turns reliability trade-offs into an explicit decision rule rather than escalation-by-opinion.

A practical layered approach is:

This pushes enterprise-grade requirements into the platform so every app team doesn’t re-invent reliability controls.

Golden paths are paved-road templates: standard service skeletons, pipelines, default dashboards, and known-good stacks. They help because:

They’re most effective when treated like a product: maintained, versioned, and improved from incident learnings.

Ecosystems often need different isolation levels:

Choose based on risk: put the highest compliance/performance sensitivity into dedicated setups, and use multi-tenant for workloads that can tolerate shared capacity with guardrails.

Prioritize end-to-end visibility and coordination:

If partner telemetry is limited, add synthetic checks at the seams and correlate with shared request IDs where possible.